#aws (2019-04)

Discussion related to Amazon Web Services (AWS)

Archive: https://archive.sweetops.com/aws/

2019-04-01

2019-04-02

2019-04-03

If your EBS backed EC2 instances are SHUTDOWN (not terminated) do you still pay for the EC2? I understand you’d still pay for EBS.

I want to turn off EC2s at night for non-core environments, using Lambda.
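
A minimal sketch of the nightly shutdown idea, assuming the non-core instances carry a tag such as `Schedule=office-hours` (the tag name and value are hypothetical) and the Lambda is triggered on a schedule. The EC2 client is passed in so the helper can be exercised without AWS. Only a single page of `describe_instances` results is handled here.

```python
def stop_tagged_instances(ec2, tag_key="Schedule", tag_value="office-hours"):
    """Stop every running instance carrying the given tag and return their IDs."""
    filters = [
        {"Name": f"tag:{tag_key}", "Values": [tag_value]},
        {"Name": "instance-state-name", "Values": ["running"]},
    ]
    response = ec2.describe_instances(Filters=filters)
    instance_ids = [
        instance["InstanceId"]
        for reservation in response["Reservations"]
        for instance in reservation["Instances"]
    ]
    if instance_ids:
        # Stopped (not terminated) instances keep their EBS volumes.
        ec2.stop_instances(InstanceIds=instance_ids)
    return instance_ids


def lambda_handler(event, context):
    # Imported lazily so the helper above can be tested without boto3 installed.
    import boto3

    return {"stopped": stop_tagged_instances(boto3.client("ec2"))}
```

A matching start function would call `start_instances` with the same filter, using `stopped` as the instance state.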

Hello everyone, is there any way to invoke a Lambda function when a user is added to or removed from an AWS account?

@Maxim Tishchenko you can log user creation/deletion events to CloudTrail https://docs.aws.amazon.com/IAM/latest/UserGuide/cloudtrail-integration.html

Learn about logging IAM and AWS STS with AWS CloudTrail.

then send all those events to lambda https://docs.aws.amazon.com/lambda/latest/dg/with-cloudtrail.html

How to set up and start using the AWS Lambda service.

which will filter the required events

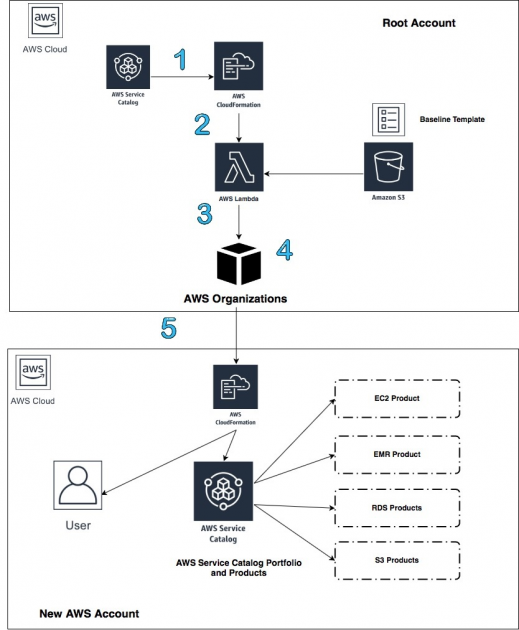
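
A rough sketch of the filtering step, assuming the Lambda receives events in the CloudWatch Events "AWS API Call via CloudTrail" shape, where the CloudTrail record sits under `detail`. The handler only prints the change; what to do with it is left open.

```python
# IAM API calls we care about; extend as needed.
WATCHED_EVENTS = {"CreateUser", "DeleteUser"}


def extract_user_change(event):
    """Return a small summary for IAM user create/delete events, else None."""
    detail = event.get("detail", {})
    if detail.get("eventSource") != "iam.amazonaws.com":
        return None
    if detail.get("eventName") not in WATCHED_EVENTS:
        return None
    params = detail.get("requestParameters") or {}
    identity = detail.get("userIdentity") or {}
    return {
        "action": detail["eventName"],
        "user": params.get("userName"),
        "actor": identity.get("arn"),
    }


def lambda_handler(event, context):
    change = extract_user_change(event)
    if change:
        print(f"IAM user change: {change}")
    return change
```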

also interesting article https://aws.amazon.com/blogs/mt/automate-account-creation-and-resource-provisioning-using-aws-service-catalog-aws-organizations-and-aws-lambda/

As an organization expands its use of AWS services, there is often a conversation about the need to create multiple AWS accounts to ensure separation of business processes or for security, compliance, and billing. Many of the customers we work with use separate AWS accounts for each business unit so they can meet the different […]

Periodic Lambda function to alert when CloudTrail is not being delivered to an S3 bucket - alphagov/lambda-check-cloudtrail

@lvh

Big up UK govt dev team ^

2019-04-04

If your EBS backed EC2 instances are SHUTDOWN (not terminated) do you still pay for the EC2? I understand you’d still pay for EBS.

EC2 instances accrue charges only while they're running

I think I read this before

And made me wonder if they meant running as in OS RUNNING / POWER ON

or running as in… it is created and say SHUTDOWN

When an EC2 instance is stopped you only pay for the EBS volumes you are using.

Thanks guys, that’s perfect then. No reason not to switch off the EC2s over night!

… The IPs wouldn’t change, right?

They will if you’re not using Elastic IPs: the public IP is released on stop and a new one is assigned on start. Private IPs stay the same.

Hmmm, so on spin-up would need to run terraform to update the R53 records. Alright that will take a bit more effort
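
As an alternative to re-running Terraform on every spin-up, the start-up Lambda could upsert the record itself. This is only a sketch: the zone ID, record name and TTL are placeholders, and the Route 53 client is passed in rather than created here.

```python
def build_upsert(record_name, public_ip, ttl=60):
    """Build a Route 53 change batch that creates or overwrites an A record."""
    return {
        "Comment": "Refresh record after instance start",
        "Changes": [
            {
                "Action": "UPSERT",
                "ResourceRecordSet": {
                    "Name": record_name,
                    "Type": "A",
                    "TTL": ttl,
                    "ResourceRecords": [{"Value": public_ip}],
                },
            }
        ],
    }


def update_record(route53, hosted_zone_id, record_name, public_ip):
    """Send the change batch; route53 is expected to be a boto3 Route 53 client."""
    return route53.change_resource_record_sets(
        HostedZoneId=hosted_zone_id,
        ChangeBatch=build_upsert(record_name, public_ip),
    )
```

Note that Terraform would then see drift on those records unless they are excluded from its management.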

when teleport provide a full script with make file for terraform, but you wish it was just a provider because you only need an 8th of what they’ve shoved in it https://github.com/gravitational/teleport/tree/master/examples/aws/terraform

Privileged access management for elastic infrastructure. - gravitational/teleport

The UI stuff’s nice for teleport but I think it’s going to give me a stroke. We took SSH proxying via a gateway and added 300 new things that can break

The default size for the SSH proxy in that thing is an m4.large: 8 GiB of RAM, dual-core

“sets up the bastion using route53 and registers a cert with letsencrypt” … so it sticks the route to your bastion into public CT logs (using wildcards is better) and there’s no config in that thing to restrict traffic to the bastion, sooo we’re doing public ssh now

All just their example of course; you can do what you like in reality. Wish it was just an ansible module (the only one around hasn’t been touched in 11 months); at least it doesn’t cripple normal ssh

so you have a backup when it facepalms

@Erik Osterman (Cloud Posse) with the cloudposse bastion, what’s the deal with users? How can it say which user logged in if the volume mounts against 1 user (just going off the readme)?

@chrism user management is outside of the scope of the project. There are dozens of ways to provision users with the #bastion

Yeah I mean if i have 9 users already there by other means how does the bastion map to those 9 users if its using volumes?

A bastion is a jump box

I know

Users should not be hanging out on it

:-)

lol no, but if I create jim, jane, alice with their own auth pubkeys do I have to map the bastion for all 3 users

See GitHub authorized keys project for inspiration

There’s a gist in the GitHub issues showing how someone else did it with CloudFormation

In comparison, if you set up teleport you add N users; it’s not mapping 1 auth key; it knows jim’s keys are x, et al.

Teleport handles SSO

Teleport is what we use :-)

Aye i’m sorta leaning that way at the moment; just not keen on the fat

feels like using a juggernaut to deliver a box of matches

We have open sourced our implementation of teleport with kops

Oh yes! It’s totally a hack job to use anything else but teleport

Plus with teleport you get easy YouTube style replays

it has lots of selling points; other than having to rejig the world

I assume you can do tedious stuff like create groups with it and say teamx can only access teamx’s machines

Yup

You can have groups

Teleport is beautiful. Inside and out.

It is a beast to set up the first time. All in all we have probably spent more than 2 months of man hours on it.

Wonder how many headaches getting our “the world is cisco” folks heads around that will be

I just used https://github.com/woohgit/ansible-role-teleport up front; slightly put off for the vsphere land as we use RoyalTS

Are you using dynamodb, IAM roles, s3 backend storage and SAML?

teleport would solve lots of the niggly “everything everywhere needs auditing” alongside the “if the grunts dont have ssh access to things I’ll have to debug everything”

For teleport auth and node?

no; I was using SSH proxy commands in a shell script and a bastion host as the lord-god-aws defined on the mount

The ansible script just uses tokens

I’ve setup a proxy/auth on the existing throwaway bastion to test it

and shoving a node on another box

Their repo’s terraform setup for AWS consists of Grafana/InfluxDB monitoring plus DynamoDB/S3

our machines are supposed to be immutable; so the only reason you’d ssh in is to grab a log thats not already being exported or to diagnose an issue before burning the machine to the ground

We use RKE rather than KOPS

which will be more pleasant when ranchers finished its v2 terraform provider that seems to wrap rke + the cli

I am just implying that to do teleport the “right way” will most likely be a lot more work. While getting a POC up takes a day or two. :-)

yeah; tbh I just wanted to see how easy it was to throw up on the minimum settings. Nothing comes free

the base setup using tokens isn’t too bad; realistically as a 1/3 of our setup isn’t kubernetes and everything sits in ASGs long-life tokens are probably more necessity than a nicety

The rancher hardening guides if they’re of interest to anyone https://releases.rancher.com/documents/security/latest/Rancher_Hardening_Guide.pdf

sigh; im sold on teleport. Now to read everything

Terraform module to provision Teleport related resources - skyscrapers/terraform-teleport

Gravitational Teleport backing services (S3, DynamoDB) - cloudposse/terraform-aws-teleport-storage

We also have the Helmfiles

How do I deploy an AWS ASG of EC2 instances through Terraform as a blue/green deployment? I am thinking about different methods:

- Create a launch template which updates/creates a new ASG and a new ALB/ELB, then switch the R53 domain to the new load balancer

- Create a new launch template, ASG and ALB, then update the existing R53 record to target the new ALB

Please suggest the best way

cross-posted, with an answer, at https://sweetops.slack.com/archives/CB6GHNLG0/p1554427553386300

2019-04-05

There’s numerous things in IAC where you start to think terraform isnt helping :face_with_rolling_eyes:

Create an aws_acm_certificate , then add an extra SAN.

Terraform sits there trying to destroy the old one… but the new one’s only just been created, so everything’s still attached to the old one and it ain’t letting go

They didn’t code in a check for the API response saying it’s in use, so it just retries to death

lmao https://github.com/terraform-providers/terraform-provider-aws/issues/3866 good god its a “feature”

We are seeing an issue with using acm certificates during terraform destroy where the certificate is still seen as in use by a load balancer that was just deleted. Due to eventually consistent apis…

There’s numerous things in IAC where you start to think terraform isnt helping

yeah, the more the current crop of tools evolve, the more I miss using my old circa ‘09 framework that just wrapped the java cli tools……

“the infra is code because we -wrote- code”

I guess the idea is that not everybody has to invent that wheel anymore? I dunno. Certainly understand the feeling though

yeah - it’s just that they write the opinions to be so frameworked…er frameworks to be so opinionated… that if you had a worldview that doesnt fit the framework….

Thoughts on Aurora Postgres? I’m aware of all the improvements it’s supposed to give for roughly the same cost over vanilla RDS Postgres, but wondering if anyone’s used it in production and what their thoughts are

We use it all the time, it’s very good

Synchronous replication with milliseconds latency

Many read replicas

nice

and costs? @Andriy Knysh (Cloud Posse)

more expensive/same/cheaper than vanilla RDS?

Now that Database-as-a-service (DBaaS) is in high demand, there is one question regarding AWS services that cannot always be answered easily : When should I use Aurora and when RDS MySQL? DBaaS clo…

For production, where you need bigger instances, the cost is roughly the same. With plain RDS you can get smaller and cheaper instances, but those are only good for testing and maybe staging

are you using it for postgres or mysql

Both

we just talked to the solutions architect, who said we can just restore the RDS Postgres snapshot to Aurora and it will just work

Yes

did you find that to be true or did aurora change things

nice

Aurora changes things mostly in user and permissions management, and some other minor things

mmm

I am using a lot of roles/databases in my RDS instance

multi-tenant so i wonder if that will change things

E.g. even the master user you create is not the admin in the cluster

thanks @Andriy Knysh (Cloud Posse)!

i will test it out

Security with Amazon Aurora PostgreSQL.

aurora’s a bit more fun to codify setup, and to do instance up/downgrades (ie, you have to do each of them yourself. tip: just create new nodes in the cluster at the scale you want, then failover to them…)

if you make the mistake of scaling your write node, it will create an outage because aurora dont care.
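
The "add a bigger node, then fail over to it" tip could be scripted roughly like this. It is a sketch only: identifiers and the instance class are placeholders, the RDS client is passed in, and the old node is left in place for manual removal.

```python
def scale_by_failover(rds, cluster_id, new_instance_id, instance_class,
                      engine="aurora-postgresql"):
    """Add a node at the target size, wait for it, then promote it to writer."""
    # A new instance in an existing Aurora cluster joins as a reader.
    rds.create_db_instance(
        DBInstanceIdentifier=new_instance_id,
        DBClusterIdentifier=cluster_id,
        DBInstanceClass=instance_class,
        Engine=engine,
    )
    # Block until the new node reports "available".
    rds.get_waiter("db_instance_available").wait(DBInstanceIdentifier=new_instance_id)
    # Fail the cluster over so the new node becomes the writer.
    rds.failover_db_cluster(
        DBClusterIdentifier=cluster_id,
        TargetDBInstanceIdentifier=new_instance_id,
    )
```

With boto3 this would be called as `scale_by_failover(boto3.client("rds"), ...)`. The failover itself still causes a short interruption for writers.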

oh, and I have a broke psql aurora node right now, it borked in prod, didnt failover. failed the cluster over, the node is still borked. can’t add support to the account because no one can get into the vault in the office where the root acct’s mfa key is.

It’s even worse with regular Postgres and MySQL :)

regular RDS used to be a dream to update/scale with Multi-AZ turned on - punch it and walk away, it’d update the slave, then fail over and update the master.

let it trigger in the maint window if you want

2019-04-08

So, looking at the reference architectures repository, there seem to be two accounts that overlap:

root

The "root" (parent, billing) account creates all child accounts and is where users login.

Of note here is the “where users login.” There’s also an “identity” account:

identity

The "identity" account is where to add users and delegate access to the other accounts

I’m a bit unsure how this would look in reality. I’m not sure how you’d “login” to the root account, if your user is over in a separate account. Am I missing something? I thought in AWS your starting point always had to be wherever your IAM user existed, and from there you can assume roles in whatever fashion is needed.

We provide a stub of an identity account

but we currently provision all our customers using the root account as the identity account.

… in other words, we don’t have a configuration for the “identity” account besides the creation of it.

Okay, I’m curious what the future idea of it is then.

Like, would I put dev accounts there, and they’d “log in” there and assume roles from there?

that’s how I’d see it yeah. that would mean the default org account role assumption wouldn’t work, and require more specific setup. which isn’t strictly a bad thing, and would save having to -undo- the default setup….

yea, rather than stick user accounts (or SSO integrations) in the “root” (payer) account, we’d just provision them in the identity account instead.

The difference is just there’s a tad bit more effort in initial setup.

Does anyone have any experience with hosting images and videos that are optimal for each device? Is there an AWS service or an approach that’s better than generating 10 versions of an image and using S3/Cloudfront?

we recently deployed https://docs.aws.amazon.com/solutions/latest/serverless-image-handler/welcome.html. It uses http://www.thumbor.org/ to change image size/format/filter on the fly. Behind a CDN works ok and fast enough. It’s CloudFormation, not TF though

How to deploy the Serverless Image Handler. AWS CloudFormation templates automate the deployment.

Thumbor is a smart imaging service. It enables on-demand crop, resizing and flipping of images. It features a very smart detection of important points in the image for better cropping and resizing, using state-of-the-art face and feature detection algorithms (more on that in Detection Algorithms).

Thank you

Where can I find the CloudFormation template(s)?

(click Next a few times) https://docs.aws.amazon.com/solutions/latest/serverless-image-handler/template.html

AWS CloudFormation template that deploys Serverless Image Handler on the AWS Cloud.

Sorry & thanks

2019-04-09

@Andriy Knysh (Cloud Posse) what are the running costs like? we currently use imageresizing.net on iis (m3 mediums) x3 with cloudfront over it as we’re loading images from s3 etal

Overview of Serverless Image Handler.

As of the date of publication, the estimated cost for running the Serverless Image Handler for 1 million images processed, 15 GB storage and 50 GB data transfer, with default settings in the US East (N. Virginia) Region is as shown in the table below. This includes estimated charges for Amazon API Gateway, AWS Lambda, Amazon CloudFront, and Amazon S3 storage

| AWS Service | Total Cost |
| --- | --- |
| Amazon API Gateway | $3.50 |
| AWS Lambda | $3.10 |
| Amazon CloudFront | $6.00 |
| Amazon S3 | $0.23 |
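
For a quick total of the quoted estimate, the four line items can simply be summed. The figures are the ones quoted above; `Decimal` is used to avoid float rounding on currency.

```python
from decimal import Decimal

# Line items as quoted in the Serverless Image Handler cost estimate.
estimate = {
    "Amazon API Gateway": Decimal("3.50"),
    "AWS Lambda": Decimal("3.10"),
    "Amazon CloudFront": Decimal("6.00"),
    "Amazon S3": Decimal("0.23"),
}

total = sum(estimate.values(), Decimal("0"))
print(f"Estimated total for the quoted workload: ${total}")
```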

ta; I’d clicked architecture, not clocking that the overview was a page

Has anyone recently started seeing this error? * module.elasticache_redis.module.label.data.null_data_source.tags_as_list_of_maps: data.null_data_source.tags_as_list_of_maps: value of 'count' cannot be computed

I ran the module a couple of weeks ago without an issue. Counts from beyond the grave

Hi there, I've traced down a problem from one of your other modules to the way that tags are consumed when you use interpolated values in tags. I'm fairly sure that it's a terraform pro…

Is this only an issue because the enabled flag sets a count on a resource that’s only ever 1, but terraform thinks it has an enumerable to work with that it can’t compute?

the answer to that is no lol its in the label code

tbh the elasticache module only needs a tags + id input; the additional dependency on label seems overkill; more injection > less dependencies

Dependency on labels is central to our entire terraform strategy. It ensures composability of modules and consistency. Humans are just not good, nor consistent about naming things. If we are not consistent about it’s usage then we would be breaking that contract. :-)

aye i mean that if it only needs id + tags then module.label.id module.label.tags to set the variable input seems less fussy.

Of course, the DNS part’s of debatable use once you enable TLS, as the TLS isn’t configurable

2019-04-10

Hey all, I’m stuck in the world of IAM and I had a thought about permissions management. It’s nice to split permissions into a user-role structure for management and auditing, but the way role assumption is done feels awkward, and users have to “know” what roles they have available to them. Does AWS provide a method to find out all the assumable roles for a given user?

Not natively that I’ve run into. At a previous gig we used OneLogin, I think it was, and the roles you had access to were based on groups from our corp AD, and you saw a list of them when you signed in. That’s the closest I’ve seen, and far from an AWS-based solution. I’d love to be wrong though, but my guess is you’d have to engineer something to provide that info.

Cool, thanks for the info Alex. I thought as much because of the somewhat arbitrary way role assumption permissions are granted. If using AWS account federation I feel it could be automated with some API calls to look for sts:AssumeRole policies & wondered if it had been done before - probably different for AD and SAML etc. Cheers for the response!
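
The "look for sts:AssumeRole policies" idea could start from something like the sketch below. It is deliberately simplified: it only inspects role trust policies (as returned by boto3's `iam.list_roles()`, which decodes `AssumeRolePolicyDocument` into a dict), only matches the exact principal ARN or the account root ARN, and ignores conditions, wildcards, SAML/federated principals and the user's own identity policies.

```python
def _as_list(value):
    """IAM policy fields may hold a single item or a list; normalise to a list."""
    return value if isinstance(value, list) else [value]


def roles_trusting(roles, principal_arn):
    """Return ARNs of roles whose trust policy names the principal or its account."""
    account_id = principal_arn.split(":")[4]
    account_root = f"arn:aws:iam::{account_id}:root"
    matches = []
    for role in roles:
        statements = _as_list(role["AssumeRolePolicyDocument"].get("Statement", []))
        for statement in statements:
            if statement.get("Effect") != "Allow":
                continue
            if "sts:AssumeRole" not in _as_list(statement.get("Action", [])):
                continue
            principal = statement.get("Principal", {})
            if not isinstance(principal, dict):
                continue  # e.g. "*", which this sketch does not handle
            trusted = _as_list(principal.get("AWS", []))
            if principal_arn in trusted or account_root in trusted:
                matches.append(role["Arn"])
                break
    return matches
```

A role that trusts the account root is only assumable if the user also has an identity policy allowing `sts:AssumeRole` on it, so the result is a list of candidates rather than a definitive answer.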

is the fargate cli the closest thing in awsland to https://cloud.google.com/run/?

Run stateless HTTP containers on a fully managed environment or in your own GKE cluster.

Probably

btw, there are (2) CLIs for AWS Fargate

Also, they’ve started developing this one again: https://github.com/jpignata/fargate

CLI for AWS Fargate. Contribute to jpignata/fargate development by creating an account on GitHub.

new maintainer

Does anyone know how RDS storage encryption works with a KMS key that has rotation enabled?

Learn about automatic and manual rotation of your customer managed customer master keys.

2019-04-16

This just popped in to my email: https://aws.amazon.com/app-mesh/

I’m wondering if this could also integrate with say GKE for multi-cloud application networking. I also wonder how that integrates with EKS, since I’ve seen envoy in use primarily as a app mesh for K8S

AWS App Mesh is a service mesh that allows you to easily monitor and control communications across services.

App Mesh is an AWS version of Istio. Look for the Istio service on GKE

Anyone know if we can use different encryption keys for a single database’s storage in an RDS instance? (multi-tenant RDS instance)

interested to know this as well

Kubernetes external secrets. Contribute to godaddy/kubernetes-external-secrets development by creating an account on GitHub.

Nice project, but the problem is that AWS Secrets Manager is quite expensive: https://aws.amazon.com/secrets-manager/pricing/. Using chamber with AWS Systems Manager Parameter Store has practically no cost https://aws.amazon.com/systems-manager/pricing/

There is no additional charge for AWS Systems Manager. You only pay for AWS resources created or aggregated by AWS Systems Manager.

I think @mumoshu was first with his operator https://github.com/mumoshu/aws-secret-operator

A Kubernetes operator that automatically creates and updates Kubernetes secrets according to what are stored in AWS Secrets Manager. - mumoshu/aws-secret-operator

$0.40 per secret per month jfc, didn’t realise it was that much.

Yea it’s odd that they charge so much for it. Don’t get it.
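
To put the $0.40 per secret per month figure in perspective, here is a small back-of-the-envelope calculation. It covers only the per-secret storage price quoted above; the secret count is a made-up example.

```python
from decimal import Decimal

PRICE_PER_SECRET_PER_MONTH = Decimal("0.40")  # figure quoted in the thread


def monthly_secret_cost(secret_count):
    """Storage cost per month for the given number of secrets."""
    return PRICE_PER_SECRET_PER_MONTH * secret_count


secrets = 250  # hypothetical number of secrets across all environments
print(f"{secrets} secrets: ${monthly_secret_cost(secrets)} per month, "
      f"${monthly_secret_cost(secrets) * 12} per year")
```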

2019-04-17

Does anyone know of a good way to tag shared resources for billing reporting/monitoring purposes? For example, if I have an ALB that’s in front of two web apps - W1 and W2, can I have a billing report that includes 1/2 of the ALB cost with W1 and the rest with W2?

I’m FinOps, trust me, you can’t do that

We could try with a lot of custom work, but the result would not be relevant

you can spread your cost by tags, but the scope will be the whole ALB, not only a part

Thanks

@Igor are you using Kubernetes by any chance?

No, not using Kubernetes

2019-04-18

Hey all, I am stuck on AWS CodeBuild with a Ruby framework and am seeking help with this

post what you’re stuck on - someone might be able to help

There must be an issue with the buildspec.yml file or the image

I tried many other options as well

Every time I get a different error

exit status is 127

It means command not found

It’s just one of the errors

See the line above: the image doesn’t have the sudo command
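
To illustrate the exit status, this small sketch runs a command that does not exist through `sh` and then checks which commands are missing from `PATH`. The command name is invented, and it assumes a POSIX `sh` is available.

```python
import shutil
import subprocess

# Run a command that does not exist; the shell reports "command not found".
result = subprocess.run(
    ["sh", "-c", "no-such-command-xyz"],
    capture_output=True,
    text=True,
)
print("exit status:", result.returncode)
print("stderr:", result.stderr.strip())


def missing_commands(commands):
    """Return the commands that cannot be found on PATH."""
    return [name for name in commands if shutil.which(name) is None]


# Checking up front which tools a build image lacks avoids chasing 127s one by one.
print("missing:", missing_commands(["sudo", "ruby", "node", "mysql"]))
```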

Does AWS CodeBuild provide a Docker image with MySQL installed?

I attended a security webinar from AWS. here are my notes

Notes from AWS’ Security Strategies webinar by Tim Rains - osulli/security-strategies

@xluffy do you have a buildspec.yml file for Ruby to use with AWS CodePipeline?

Here is the latest error I am getting

See the error: this is a different one. This container doesn’t have any JS runtime (you need a container with a JS runtime)

No JS runtime installed or available in PATH

The provided AWS Docker image doesn’t have it. I tried installing it but had no success. Also, AWS CodeBuild has only a single image for Ruby

2019-04-23

Hello, anyone using ec2 spot fleet plugin with jenkins?

2019-04-25

Can someone help me on this?

Just in case anyone finds it useful.

AWS Management Console down :warning: without region #aws #outage :aws: (https://status.aws.amazon.com/)

• https://us-west-2.console.aws.amazon.com/console works :github-check-mark:
• https://us-east-2.console.aws.amazon.com/console works :github-check-mark:
• https://us-east-1.console.aws.amazon.com/console does NOT work :negative_squared_cross_mark:
• https://console.aws.amazon.com/console/home :disappointed:

So you can basically bypass the error by specifying the console region in the access URL.

To hit a specific service in us-east-1 you can use the service URL, eg: https://us-east-1.console.aws.amazon.com/ec2/v2/home?region=us-east-1#Home:
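
The URL pattern above is easy to generate. This is a small sketch: the helper name is made up, and the service path (`ec2/v2/home`) is taken from the example URL and differs per service.

```python
def regional_console_url(region, service_path=None):
    """Build a region-pinned AWS console URL, optionally for a specific service."""
    base = f"https://{region}.console.aws.amazon.com"
    if service_path is None:
        return f"{base}/console"
    return f"{base}/{service_path}?region={region}"


print(regional_console_url("us-west-2"))
print(regional_console_url("us-east-1", "ec2/v2/home"))
```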

2019-04-29

When you accidentally spin up a k8 cluster using T instances and the cpu-burst wipes out leaving 1 node running at ~20% of cpu

All kinds of awesome: https://infrastructure.aws/

The AWS Global infrastructure is built around Regions and Availability Zones (AZs). AWS Regions provide multiple, physically separated and isolated Availability Zones which are connected with low latency, high throughput, and highly redundant networking.

Is there a way to get objects from a private AWS S3 bucket using CloudFront without presigned URLs?

2019-04-30

Yup, you can use an Origin Access Identity on the CloudFront distribution, which is granted access to the S3 bucket via a bucket policy. If you need your own auth at the CDN level you can implement it with a small Lambda@Edge function too. :)
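
For reference, the bucket policy that grants an Origin Access Identity read access looks roughly like the output of this sketch. The bucket name and OAI ID are placeholders. The policy would be attached to the private bucket, for example with `put_bucket_policy` or through Terraform.

```python
import json


def oai_bucket_policy(bucket, oai_id):
    """Bucket policy letting one CloudFront Origin Access Identity read objects."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowCloudFrontOAIRead",
                "Effect": "Allow",
                "Principal": {
                    "AWS": "arn:aws:iam::cloudfront:user/"
                           f"CloudFront Origin Access Identity {oai_id}"
                },
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",
            }
        ],
    }


# Placeholder bucket name and OAI ID, for illustration only.
print(json.dumps(oai_bucket_policy("example-media-bucket", "E2EXAMPLE1OAI"), indent=2))
```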

@Lee Skillen thanks for answering my question

What would be the advantage of using an Origin Access Identity over presigned URLs/cookies?

It’s transparent to those using the CDN for a start, and it means you don’t need to generate presigned URLs upfront. The downside is that you might still be interested in protecting the content, so need to think about auth in a different way.

What kind of content is it?

it is all media files

Do you need auth? What if someone obtains a URL and distributes it to others?

if all content of the bucket is not a secret, then having private or public bucket does not make any difference since your users will see all the files via CloudFront. With a private bucket, use origin access identity as @Lee Skillen mentioned

Terraform module to easily provision CloudFront CDN backed by an S3 origin - cloudposse/terraform-aws-cloudfront-s3-cdn

we want to make the media files private, so planning to use private bucket

One small difference is that a public bucket can be listed, but that’s not necessarily true with CloudFront. It may make a difference if you don’t care if files are public, but also don’t want easy access to all of the other files. It wouldn’t make sense for me, but I have seen people do this with URLs that are impossible to guess upfront (e.g. with randomised prefixes or something else in the URL). But if you go to that extent, I would just throw an auth method in there via Lambda@Edge. :)
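
A very small sketch of what "an auth method via Lambda@Edge" might look like as a viewer-request handler, assuming a Python runtime and a hypothetical shared bearer token. A real setup would more likely validate a signed cookie or JWT issued by the main app.

```python
import hmac

# Hypothetical shared secret; a real deployment would not hard-code this.
EXPECTED_TOKEN = "replace-me"


def lambda_handler(event, context):
    """Viewer-request handler: pass the request through or answer 401."""
    request = event["Records"][0]["cf"]["request"]
    # CloudFront lower-cases header names and stores each as a list of key/value pairs.
    values = request["headers"].get("authorization", [])
    supplied = values[0]["value"] if values else ""
    expected = f"Bearer {EXPECTED_TOKEN}"
    # Constant-time comparison avoids leaking how much of the token matched.
    if hmac.compare_digest(supplied.encode(), expected.encode()):
        return request  # returning the request lets CloudFront continue
    return {
        "status": "401",
        "statusDescription": "Unauthorized",
        "headers": {
            "www-authenticate": [{"key": "WWW-Authenticate", "value": "Bearer"}]
        },
    }
```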

I have seen people do this with URLs that are impossible to guess upfront

That’s security by obscurity: bad idea, never works

Depends on your goal, but I broadly agree :)

I haven’t used CloudFront + Lambda@Edge. Did you use any auth method with Lambda@Edge?

Is there a way to automatically update Amazon AMIs on a launch template to the latest, instead of having to rerun terraform against it on a monthly basis?
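
One possible approach, sketched under assumptions: a scheduled Lambda reads the latest Amazon Linux 2 AMI ID from the public SSM parameter and adds a new launch template version that uses it. The launch template ID is a placeholder, both clients are passed in, and Terraform would need to ignore the resulting version drift.

```python
# Public SSM parameter that AWS keeps pointed at the latest Amazon Linux 2 AMI.
AMI_PARAMETER = "/aws/service/ami-amazon-linux-latest/amzn2-ami-hvm-x86_64-gp2"


def refresh_launch_template(ssm, ec2, launch_template_id, parameter_name=AMI_PARAMETER):
    """Create a new template version with the latest AMI and make it the default."""
    ami_id = ssm.get_parameter(Name=parameter_name)["Parameter"]["Value"]
    # Copy the latest version and override only the image.
    created = ec2.create_launch_template_version(
        LaunchTemplateId=launch_template_id,
        SourceVersion="$Latest",
        LaunchTemplateData={"ImageId": ami_id},
    )
    version = created["LaunchTemplateVersion"]["VersionNumber"]
    ec2.modify_launch_template(
        LaunchTemplateId=launch_template_id,
        DefaultVersion=str(version),
    )
    return ami_id, version
```

An ASG that references the template's `$Default` or `$Latest` version would pick up the new AMI for newly launched instances; existing instances are not replaced by this.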

@rohit It depends how fancy you want to get. How are users authenticated before they access the CDN? I assume you wouldn’t want them to have to pass a username/password via basic auth if they were already authed before? In fact, are they authed at all or “anonymous”? Is the CDN on a subdomain of your main app website (if any)? You said media before, is it for static assets, downloads or streaming? Lots of questions and possibilities. :)