#aws (2019-12)

Discussion related to Amazon Web Services (AWS)

Archive: https://archive.sweetops.com/aws/

2019-12-01

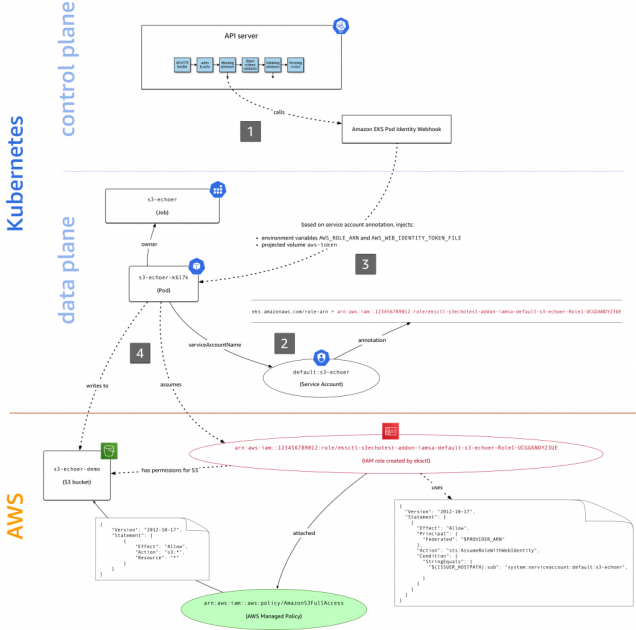

I am following https://aws.amazon.com/blogs/opensource/introducing-fine-grained-iam-roles-service-accounts/ to set up IAM roles for my pods. While trying to execute the command

aws sts assume-role-with-web-identity \
  --role-arn $AWS_ROLE_ARN \
  --role-session-name mh9test \
  --web-identity-token file://$AWS_WEB_IDENTITY_TOKEN_FILE \
  --duration-seconds 1000 > /tmp/irp-cred.txt

I am getting the error:

An error occurred (AccessDenied) when calling the AssumeRoleWithWebIdentity operation: Not authorized to perform sts:AssumeRoleWithWebIdentity

What am I missing?

Here at AWS we focus first and foremost on customer needs. In the context of access control in Amazon EKS, you asked in issue #23 of our public container roadmap for fine-grained IAM roles in EKS. To address this need, the community came up with a number of open source solutions, such as kube2iam, kiam, […]

I figured this out: I wasn’t setting the (non-default) namespace in the assume-role-policy JSON.
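
For anyone hitting the same AccessDenied: the role's trust policy normally pins the token's `sub` claim to one namespace and service account, so a pod running in a different namespace is rejected. Below is a minimal sketch of generating such a policy. The account ID, OIDC provider host, namespace, and service account name are placeholder assumptions, not values from this thread.

```python
import json


def irsa_trust_policy(account_id, oidc_provider, namespace, service_account):
    """Build an IAM trust policy for EKS IAM Roles for Service Accounts.

    oidc_provider is the cluster's OIDC issuer without the https:// prefix.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    "Federated": f"arn:aws:iam::{account_id}:oidc-provider/{oidc_provider}"
                },
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        # The namespace is part of the subject claim, so a policy
                        # written for "default" rejects pods that run elsewhere.
                        f"{oidc_provider}:sub": (
                            f"system:serviceaccount:{namespace}:{service_account}"
                        )
                    }
                },
            }
        ],
    }


# Placeholder values (assumptions for illustration only).
policy = irsa_trust_policy(
    account_id="111122223333",
    oidc_provider="oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE",
    namespace="my-namespace",
    service_account="my-service-account",
)
print(json.dumps(policy, indent=2))
```

The printed JSON could then be used as the role's assume-role policy document.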

Anyone at re:Invent this week?

2019-12-02

Hi, my company has just open-sourced a tool I’ve written. I don’t know of any other that is as accurate and as fast. I hope it will be useful to some of you: https://github.com/claranet/aws-inventory-graph

Explore your AWS platform with, Dgraph, a graph database. - claranet/aws-inventory-graph

Is this similar to https://cloudcraft.co/?

Visualize your AWS environment as isometric architecture diagrams. Snap together blocks for EC2s, ELBs, RDS and more. Connect your live AWS environment.

Not exactly. The aim is not to output a diagram, but to explore an architecture in whatever way we want.

For example, you can easily spot all instances which are open to the world,

or see which instances have access to a specific database.

Ah, cool. Nice to query.

yes, you query in a GraphQL language

it’s really fast (<5s for 500 resources, with listing + import)

I’ll check it out.

thanks

Hello AWS friends. I’m trying to create an aws_rds_cluster and for the life of me I can’t find a matching combination of engine, engine_version, and cluster_family (and probably instance_type while I’m at it). I want mysql (or aurora-mysql). I think I’d prefer 5.7 but I’d be happy with 5.6. I keep getting the error

InvalidParameterCombination: RDS does not support creating a DB instance with the following combination

I’ve looked over so many “documentation” pages but nowhere can I find a chart that tells me what is compatible with what.

Ok, so in a stroke of genius (or more likely dumb luck), I found my answer: use the AWS CLI to tell me. I had no idea. First get the possible engine combinations:

aws rds describe-db-engine-versions | jq '.DBEngineVersions[] | ([.Engine , .EngineVersion, .DBParameterGroupFamily])' -c

["aurora-mysql","5.7.12","aurora-mysql5.7"]

["aurora-mysql","5.7.mysql_aurora.2.03.2","aurora-mysql5.7"]

["aurora-mysql","5.7.mysql_aurora.2.03.3","aurora-mysql5.7"]

["aurora-mysql","5.7.mysql_aurora.2.03.4","aurora-mysql5.7"]

...many rows omitted

Then get the possible instance class combinations for the chosen engine version:

aws rds describe-orderable-db-instance-options --engine aurora-mysql --engine-version 5.7.12 | jq '.OrderableDBInstanceOptions[] | ([.Engine, .EngineVersion, .DBInstanceClass])' -c

["aurora-mysql","5.7.12","db.r3.2xlarge"]

["aurora-mysql","5.7.12","db.r3.4xlarge"]

["aurora-mysql","5.7.12","db.r3.8xlarge"]

["aurora-mysql","5.7.12","db.r3.large"]

...many rows omitted

AND IT WORKED. Yey. Thx.
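
The same filtering can also be done in Python on the JSON that the CLI prints, which helps when the list has to be narrowed further. This is only a sketch: the sample response below is a trimmed assumption built from two of the rows above, and the function name is made up for illustration.

```python
import json

# Trimmed sample shaped like `aws rds describe-db-engine-versions` output;
# only the three fields used in the thread are included.
SAMPLE = json.loads("""
{"DBEngineVersions": [
  {"Engine": "aurora-mysql", "EngineVersion": "5.7.12",
   "DBParameterGroupFamily": "aurora-mysql5.7"},
  {"Engine": "aurora-mysql", "EngineVersion": "5.7.mysql_aurora.2.03.2",
   "DBParameterGroupFamily": "aurora-mysql5.7"}
]}
""")


def engine_combinations(response, engine=None):
    """Return (engine, version, parameter group family) tuples, optionally filtered."""
    return [
        (v["Engine"], v["EngineVersion"], v["DBParameterGroupFamily"])
        for v in response["DBEngineVersions"]
        if engine is None or v["Engine"] == engine
    ]


for combo in engine_combinations(SAMPLE, engine="aurora-mysql"):
    print(combo)
```

With real data, the response could be read with `json.load(sys.stdin)` after piping the CLI output into the script.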

2019-12-03

anyone using django-amazon-ses with SES domain configured in another account?

(i know it’s not aws specific but couldn’t find a better place to ask)

2019-12-04

Hi guys, I have a question about EBS snapshots. In our case we are going to take snapshots of 3 volumes of 100 GB each. Snapshots will be taken daily, and the expected change is 2% per day, i.e. 2 GB. Retention is not counted yet. So the total cost just for EBS snapshots is around:

Total snapshots: 30

Initial snapshot cost: 100 GB x 0.05 USD = 5 USD

Monthly cost of each snapshot: 2 GB x 0.05 USD = 0.1 USD

Discount for partial storage month: 0.1 USD x 50% = 0.05 USD

Incremental snapshot cost: 0.05 USD x 30 = 1.5 USD

Total snapshot cost: 5 USD + 1.5 USD = 6.5 USD

6.50 USD x 3.00 instance months = 19.50 USD (total EBS snapshot cost)

Is there any way this EBS snapshot cost can be reduced (e.g. with a retention policy on the snapshots)? And if the snapshots are kept into the next month, will the cost stay the same as above or will it increase?
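
The arithmetic above can be reproduced in a few lines, which also makes the second question easier to reason about. This is a rough sketch using the same simplified model as the estimate (0.05 USD per GB-month, incrementals charged at 50% in the month they are taken). The second function is an assumption on my part: it treats every retained snapshot as holding its full 2 GB of unique blocks for the whole following month, so it is an upper bound and not a billing statement.

```python
def first_month_cost(volume_gb, daily_change_gb, price_per_gb_month,
                     snapshots=30, volumes=1):
    """Reproduce the estimate above: one full snapshot plus daily incrementals.

    Incrementals are charged at 50% because, averaged over the month,
    each one is stored for roughly half of it.
    """
    initial = volume_gb * price_per_gb_month
    incremental = daily_change_gb * price_per_gb_month * 0.5 * snapshots
    return round((initial + incremental) * volumes, 2)


def full_month_storage_cost(volume_gb, daily_change_gb, price_per_gb_month,
                            retained_snapshots=30, volumes=1):
    """Cost of keeping already-taken snapshots for one more full month."""
    stored_gb = volume_gb + daily_change_gb * retained_snapshots
    return round(stored_gb * price_per_gb_month * volumes, 2)


print(first_month_cost(100, 2, 0.05, volumes=3))         # month the snapshots are taken
print(full_month_storage_cost(100, 2, 0.05, volumes=3))  # carrying all of them over
```

Under these assumptions the bill grows while snapshots accumulate and levels off once a retention policy starts expiring old ones, so a shorter retention window is the main lever for reducing it.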

2019-12-05

Hi guys, has anyone here migrated containers running on EC2 to ECS?

What is your question @Rudyard?

I have one environment with some microservices running on EC2, and I want to migrate to AWS ECS using the same EC2 instances. Is that possible?

I take it you are not wanting to go to Fargate then?

You could install the ECS agent on the currently running instances and have them talk to ECS, but I’d likely stand up a new cluster with the agents on and cut over traffic once you are happy with it

thanks @joshmyers

2019-12-06

I log in to an AWS account through Okta. It is read-only. There is an IAM role I’d like to assume in the same account which would let me approve CodePipeline. Is this possible?

The trust policy principal for the IAM role is set to the account number of the said read-only account

but I get the following error when trying to assume the role

Could not switch roles using the provided information. Please check your settings and try again. If you continue to have problems, contact your administrator.

any ideas/tips?

AssumeRole is not a read-only action. You probably need to modify the read-only role with a policy that allows you to assume the role you want.
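
A minimal sketch of the kind of statement that could be attached to the read-only role, assuming the target role's trust policy already trusts the account. The role ARN is a hypothetical placeholder, not one from this thread.

```python
import json


def allow_assume_role(target_role_arn):
    """Identity policy letting the caller assume one specific role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": target_role_arn,
            }
        ],
    }


# Hypothetical ARN for the pipeline-approver role (an assumption for illustration).
policy = allow_assume_role("arn:aws:iam::111122223333:role/pipeline-approver")
print(json.dumps(policy, indent=2))
```

Scoping `Resource` to the single role keeps the rest of the Okta role read-only.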

2019-12-09

I have configured a VPC PrivateLink back to an ASG that acts as a TCP proxy to RDS because of a CIDR collision across VPCs. I understand this is less than ideal, but going through this proxy is staggeringly slower than hitting the RDS LB intra-VPC. Assuming that at present I cannot use VPC peering, does anybody have a better architecture for hitting RDS across these VPCs with less overhead?

can you use an NLB with an IP-based target group, using the RDS IPs?

basically this article, but repurposed for privatelink instead of public access… https://www.mydatahack.com/how-to-make-rds-in-private-subnet-accessible-from-the-internet/

I forgot to mention this is Aurora

we’re using aurora also, looks like there is still an IP for the cluster. i’d think the NLB option would still work…

True. The scaling should not matter in this case

Thanks. I will give it a shot

good luck, cool use case! would appreciate pinging the thread with how it goes!

I will try to update the thread with performance comparisons after setting it up

Bogged down with some Istio stuff so it will be delayed.

Just to follow up on this. We decided to revamp our infrastructure to make this a moot point so I did not end up testing this configuration

2019-12-10

2019-12-11

Hi there. I am new to AWS Systems Manager (SSM). I am trying to invoke a Lambda function through an Automation document. The Lambda function expects two input arguments, which are supposed to be passed in as mandatory input parameters to the document at execution time. I am trying to configure the Lambda function as the only step in my Automation document, but I couldn’t figure out from the AWS docs how to pass the two input parameters (tagKey and tagValue) into the Lambda function in step 1. Maybe I’m being naive. Here is my current document:

schemaVersion: '0.3'
parameters:
  tagKey:
    type: String
  tagValue:
    type: String
mainSteps:
  - name: script
    action: 'aws:invokeLambdaFunction'
    inputs:
      FunctionName: getInstances

I got it working now

schemaVersion: '0.3'
parameters:
  tagKey:
    type: String
  tagValue:
    type: String
mainSteps:
  - name: InvokeLambda
    action: 'aws:invokeLambdaFunction'
    inputs:
      FunctionName: getInstances
      Payload: |-
        {
          "tagKey": "{{tagKey}}",
          "tagValue": "{{tagValue}}"
        }
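
On the Lambda side, the Payload arrives as the event, so the two parameters can be read as plain keys. The handler below is a hypothetical sketch: the real body of getInstances is not shown in this thread, and turning the values into an EC2 tag filter is only my guess at what such a function might do.

```python
def lambda_handler(event, context):
    """Hypothetical body for getInstances: turn the payload into an EC2 tag filter."""
    tag_key = event["tagKey"]      # filled from {{tagKey}} in the document
    tag_value = event["tagValue"]  # filled from {{tagValue}} in the document
    # Filter shape accepted by EC2 DescribeInstances, for example through
    # boto3's ec2.describe_instances(Filters=...).
    return {"Filters": [{"Name": f"tag:{tag_key}", "Values": [tag_value]}]}


# Local check with the same keys the Payload template produces.
print(lambda_handler({"tagKey": "Environment", "tagValue": "staging"}, None))
```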

2019-12-12

Anyone else just have a couple of minutes of network issues in us-east-1?

2019-12-16

Hey people. I’m confused by AWS S3 permissions. I’m using an admin user and getting a 403 on REST.PUT.OBJECT when I try to upload a package to my bucket using the Go SDK

but it succeeds when using the Python SDK (via awscli)

I enabled access logs for a bit and can see in the output the following:

47f98aca4f8daea37b80eff11d8c5665183bbe5982ba3468ee855c24541e5aa9 <bucket-name> [16/Dec/2019:16:56:03 +0000] 88.97.110.121 arn:aws:iam::<account-number>>:user/william 0D07E35C2A2D59AA REST.PUT.OBJECT pool/main/n/nodejs/nodejs_8.16.2-1nodesource1_amd64.deb "PUT /pool/main/n/nodejs/nodejs_8.16.2-1nodesource1_amd64.deb HTTP/1.1" 403 AccessDenied 243 - 3 - "-" "aws-sdk-go/1.13.31 (go1.12.6; linux; amd64)" - MPnRd23yUt/d7UE7rcQcthmcpQX1hazCQLjLW1aTjXXx44Fh1gIdDO1yGlWIgQ6UigqOmeBbt3I= SigV4 ECDHE-RSA-AES128-GCM-SHA256 AuthHeader s3.eu-west-2.amazonaws.com TLSv1.2

did you manage to capture and compare the success vs the failed log? …
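
One way to do that comparison is to split both access log records into named fields and print only the ones that differ. The sketch below covers just the leading fields of the S3 server access log format. The two sample records are shortened, made-up lines (the owner, bucket, request IDs, and the second user agent are placeholders), not the real logs from this thread.

```python
import re

# Leading fields of an S3 server access log record, in order.
FIELDS = [
    "bucket_owner", "bucket", "time", "remote_ip", "requester", "request_id",
    "operation", "key", "request_uri", "http_status", "error_code",
    "bytes_sent", "object_size", "total_time", "turn_around_time",
    "referer", "user_agent", "version_id",
]

# A token is a [bracketed] timestamp, a "quoted" string, or a run of non-spaces.
TOKEN = re.compile(r'\[[^\]]*\]|"[^"]*"|\S+')


def parse_record(line):
    """Map the leading fields of one access log line to names."""
    tokens = [t.strip('[]"') for t in TOKEN.findall(line)]
    return dict(zip(FIELDS, tokens))


def diff_records(a, b, ignore=("time", "request_id")):
    """Return {field: (a_value, b_value)} for fields that differ."""
    return {k: (a.get(k), b.get(k)) for k in FIELDS
            if k not in ignore and a.get(k) != b.get(k)}


# Made-up sample records for illustration only.
FAILED = ('OWNER my-bucket [16/Dec/2019:16:56:03 +0000] 192.0.2.1 '
          'arn:aws:iam::111122223333:user/william REQ1 REST.PUT.OBJECT pool/x.deb '
          '"PUT /pool/x.deb HTTP/1.1" 403 AccessDenied 243 - 3 - "-" '
          '"aws-sdk-go/1.13.31 (go1.12.6; linux; amd64)" -')
SUCCEEDED = ('OWNER my-bucket [16/Dec/2019:16:58:10 +0000] 192.0.2.1 '
             'arn:aws:iam::111122223333:user/william REQ2 REST.PUT.OBJECT pool/x.deb '
             '"PUT /pool/x.deb HTTP/1.1" 200 - - 1024 40 12 "-" '
             '"aws-cli/1.16.0 Python/3.7.0" -')

for field, values in diff_records(parse_record(FAILED), parse_record(SUCCEEDED)).items():
    print(field, values)
```

If the requester, key, and operation match and only the status differs, the difference is more likely in request details that the access log does not record, such as headers the two SDKs send.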

for EFS storage on Amazon ECS tasks - rexray vs CloudStor vs any of the other things that popped up since 2017… rexray seems like a good bet, right?

Bear with me.. I last solved my needs in 2017 ( https://github.com/aws/amazon-ecs-agent/commit/14f9a2efc8bbf08c44fea0208ab32c2fc788ae4d ) but the time has now come to finally renew this…

coerce ecs-agent to support EBS volumes via blocker volume plugin for docker Currently tested using this fork because it's stateless: https://github.com/monder/blocker Create a volume with …

ehm… but now that I look through things on the rexray side it too seems to be stale in some departments. NVMe support github issues are still open….

Can’t speak to any of the other solutions, but we’re still using EFS, and can’t really complain about anything (other than the read speeds probably not being super high). Though there will hopefully be a better future option than mounting the same volume on all the machines in the cluster just to have it be available to all of them

In the end I got CloudStor working quite well.

2019-12-18

Hello. I am trying to access AWS Secrets Manager from Python code that is deployed as a pod on EKS. I haven’t enabled IRSA yet and the nodes have access to Secrets Manager, but the pod errors out saying it is unable to locate credentials. Why is boto not able to generate temporary creds using the role of the node that the pod is deployed to? What am I missing? Here’s my code:

import os
import boto3

session = boto3.session.Session()
client = session.client(service_name='secretsmanager',
                        region_name=os.environ["AWS_REGION"])

Can the boto client in the pod hit the metadata endpoint of the host?

Yup..it can

oh.. i take that back.. I checked a direct curl from pod..let me check the client

@joshmyers: Your hint has been super helpful..I was able to find the role the pod sees. I had missed giving access to the node to view a particular set of secrets ..thank you
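
For reference, a small sketch of the check described here: asking the instance metadata endpoint which role the pod actually sees. It assumes the unauthenticated (IMDSv1) path is reachable from the pod. If the node enforces IMDSv2, a session token header would be needed as well, which this sketch does not handle. The `fetch` parameter is my own addition so the lookup can be tried without network access.

```python
import urllib.request

# Instance metadata path that lists the IAM role attached to the node (IMDSv1).
ROLE_URL = "http://169.254.169.254/latest/meta-data/iam/security-credentials/"


def http_get(url, timeout=2):
    """Plain GET; only works from an EC2 instance or a pod that can reach IMDS."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode()


def node_role_name(fetch=http_get):
    """Return the role name the metadata endpoint advertises."""
    return fetch(ROLE_URL).strip()
```

Running `print(node_role_name())` inside the pod shows the role name, and that role's policies can then be checked for the Secrets Manager permissions the code needs.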

2019-12-19

I just released a new version, with a better look for lists and proxy availability: https://github.com/claranet/sshm/releases/tag/1.1.1

Easy connect on EC2 instances thanks to AWS System Manager Agent. Just use your ~/.aws/profile to easily select the instance you want to connect on - claranet/sshm

please cross post to #community-projects !

so it does not need an SSH key?

Yes, what @maarten said

when I was reading the docs it said you need an SSH key on the instances

but maybe I’m wrong

?

You’re thinking of EC2 Instance Connect I believe. Whereas this uses Systems Manager Session Manager.

no

I’m talking about SSM

I use SSM session manager every day

web console, since I do not need a shell most of the time

but I tried to set up the proxy

using the SSM CLI agent command

and it did not work

this :

host i-* mi-*
    ProxyCommand sh -c "aws ssm start-session --target %h --document-name AWS-StartSSHSession --parameters 'portNumber=%p' --profile stage"

and that did not work

and in the docs it says you need a shared ssh key

and that is exactly what I don’t want

that is why we use Instance Connect for those cases

but this tool seems to not need the key ?

I’m very confused

@Issif

Oh sorry, I left this Slack and never saw your question.

Yes, you don’t need an SSH key with SSM

np, I figured it out

Is the tool useful for you? I quit my previous job and we don’t use SSM here for now, so I haven’t used it for a while

very useful

I really like it

cool

thanks

2019-12-20

rds related question - for gp2 rds databases that have burstable iops, is the rds database supposed to be just as performant at 100% burst balance as it is at say 15% burst balance? Is it only once you get to 0% burst balance that your rds database gets essentially throttled?

correct, as long as you have credits you can burst, it’s not a gradual process

A common way to have enough baseline IOPS available is to provision a large volume: gp2 gives 3 IOPS per GB provisioned.

thanks @maarten i thought so
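
To put rough numbers on that, here is a small sketch of the gp2 credit arithmetic. Only the 3 IOPS per GB figure comes from this thread. The 100 IOPS floor, the 3,000 IOPS burst rate, the 16,000 IOPS cap, and the 5.4 million credit bucket are the published gp2 figures as I understand them, so treat them as assumptions and check the current AWS documentation.

```python
def gp2_baseline_iops(size_gb):
    """Baseline for gp2: 3 IOPS per GB, with a floor of 100 and a cap of 16,000."""
    return min(max(100, 3 * size_gb), 16000)


def gp2_full_burst_seconds(size_gb, burst_iops=3000, bucket=5_400_000):
    """Seconds a full credit bucket lasts while running flat out at burst_iops."""
    baseline = gp2_baseline_iops(size_gb)
    if baseline >= burst_iops:
        return float("inf")  # baseline already meets the burst rate
    # Credits drain at the burst rate and refill at the baseline rate.
    return bucket / (burst_iops - baseline)


for size in (20, 100, 500, 1000):
    print(size, gp2_baseline_iops(size), gp2_full_burst_seconds(size))
```

This matches the answer above: performance stays at the burst rate until the bucket is empty and then drops to the baseline, and at 1,000 GB the baseline already equals the burst rate.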

hi everyone, I’m currently using CloudCheckr as an AWS cost reporting tool but I am not too happy with it. Does anyone have any recommendations for AWS cost reporting/management tools?

we used cloudcheckr

we ended up using Cost Explorer

with some custom reports

with all our accounts in AWS Organizations

in the “billing” org

@jose.amengual - what was the reason to drop cloudcheckr? (if you can share)

We’ve been using Metabase with Stitchdata to load the CSV into our own data warehouse

All your data. Where you want it. In minutes. Stitch is a cloud-first, developer-focused platform for rapidly moving data. Hundreds of data teams rely on Stitch to securely and reliably move their data from SaaS tools and databases into their data warehouses and data lakes.

The fastest, easiest way to share data and analytics inside your company. An open source Business Intelligence server you can install in 5 minutes that connects to MySQL, PostgreSQL, MongoDB and more! Anyone can use it to build charts, dashboards and nightly email reports.

but it requires a lot of SQL fu

(this is for cloudposse internal, not customers)

same as you, cost reports were not that clear, price, overcrowded UI, lack of API etc

@Erik Osterman (Cloud Posse) may skip that option

haha

the homegrown option

If I need to add a custom host mapping on an ECS Fargate container running in awsvpc networking mode, what’s the way to do it?

Do I need to modify /etc/hosts in the container on startup? Or is there a better way, maybe through Route 53?

You can modify /etc/hosts in your container entrypoint if you like.

No! It does not work, because kubelet will reset all of your changes. Use hostAliases instead: https://kubernetes.io/docs/concepts/services-networking/add-entries-to-pod-etc-hosts-with-host-aliases/

(unfortunately extraHosts on the task definition doesn’t work with awsvpc mode)
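
A rough sketch of the entrypoint approach for the ECS case. The kubelet remark applies to Kubernetes pods, and there is no kubelet on ECS. The sketch assumes the container runs as a user that is allowed to write `/etc/hosts`, which I have not verified on Fargate. The function name and the example mapping in the note below are hypothetical.

```python
def add_host_entry(ip, hostname, hosts_path="/etc/hosts"):
    """Append 'ip hostname' to the hosts file unless the hostname is already mapped."""
    with open(hosts_path) as f:
        lines = f.read().splitlines()
    for line in lines:
        # Drop comments, then look for the hostname after the address column.
        fields = line.split("#", 1)[0].split()
        if hostname in fields[1:]:
            return False  # already present, leave the file alone
    with open(hosts_path, "a") as f:
        f.write(f"{ip}\t{hostname}\n")
    return True
```

An entrypoint script could call something like `add_host_entry("10.0.0.10", "internal-api.example")` and then hand over to the container's real command with `os.execvp(sys.argv[1], sys.argv[1:])`, so the application still runs as the main process.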

2019-12-23

Hi guys! Has anyone tried to send CloudWatch alarms to Slack using SNS + AWS Chatbot?

Does it work?

CW Alarms > SNS > Chatbot > Slack works great. However, for me the RDS/Redshift cluster events to Chatbot do not work.