#docker (2018-11)

All things docker

Archive: https://archive.sweetops.com/docker/

2018-11-14

Hi People,

does anyone know a good, proper way to remove layers containing secrets from a docker image?

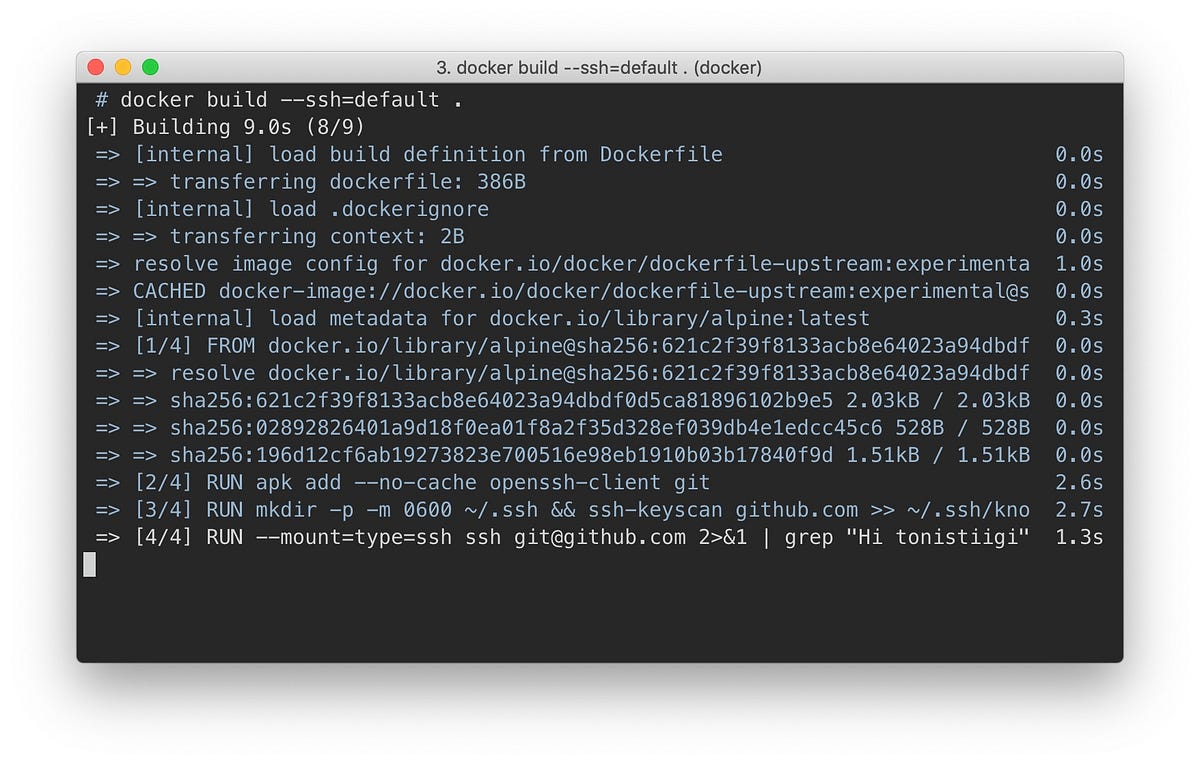

e.g. the only ways to do this currently are to use multi-stage builds or to unset the ENV/ARGs. There’s also this blog post that mentions experimental secrets, but not for strings: https://medium.com/@tonistiigi/build-secrets-and-ssh-forwarding-in-docker-18-09-ae8161d066

TL;DR docker history <docker_images> exposes layers with secrets from build time.

One of the complexities when using Dockerfiles has always been accessing private resources. If you need to access some private repository…

@Nikola Velkovski did you look at https://github.com/goldmann/docker-squash

Docker image squashing tool. Contribute to goldmann/docker-squash development by creating an account on GitHub.

here is a post on how to do it https://blog.tomecek.net/post/docker-build-with-secrets/

Technical blog. Read about Linux, Python, Containers, Red Hat, Fedora, tools.

Nope, thanks will have a look.

I also found this https://docs.docker.com/develop/develop-images/build_enhancements/#new-docker-build-command-line-build-output which is more or less what the blog post is saying

Docker Build is one of the most used features of the Docker Engine - users ranging from developers, build teams, and release teams all use Docker Build. Docker Build enhancements…

I like this new output


i believe it’s also possible to squash an image without any third-party tools

docker build --squash

Ah yes, we discussed it with @maarten, but when using --squash there will be no caching.

Ah, that’s true… let’s step back: why do you need the secrets in the image to begin with?

in this particular case it’s for ruby gems, i need to bundle them from a private repo so I need the api key from it

there are cases for npm etc…

Can you use build args? Those are not persisted in the layers unless a build step writes them to disk.

Something like

    FROM node:X
    ARG NPM_TOKEN
    RUN echo "//registry.npmjs.org/:_authToken=$NPM_TOKEN" > ~/.npmrc && \
        npm install && \
        rm ~/.npmrc

@Erik Osterman (Cloud Posse) I am using args but it turns out those layers also persist

yes, the layers persist, but that’s why you need to rm in the same layer

see the example above

need to chain with &&

Argh that’s why… ok great good to know!

Description: Build an image from a Dockerfile. Usage: docker image build [OPTIONS] PATH | URL | -. Options (name, shorthand; default; description): --add-host: add a custom host-to-IP mapping (host:ip); --build-arg…

You can also have an internal proxy that you use in your build process

that proxy then has the authentication token for github

we wrote one for the docker registry to do this

so we could pull images without authentication in a trusted environment

same concept would apply to github

here’s the simple service

(you’d adapt something like this to proxy github requests instead)

DUPE: ~@Nikola Velkovski check this out https://medium.com/@tonistiigi/build-secrets-and-ssh-forwarding-in-docker-18-09-ae8161d066~

~Posted just a few days ago… highly relevant~

Ah yeah, I already read it and posted it yesterday

hahah

sorry, missed that! you did post it

Anyway thanks for the help, I was also under the impression that passing secrets as args is ok

luckily I haven’t pushed any public images

so regarding that experimental feature, that would be the cleanest, no?

yes
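For reference, a minimal sketch of that build-secrets approach. Everything specific here is an assumption made for illustration: the secret id `npmrc`, the `node:lts-alpine` base image, the `secret-demo` tag, and the `RUN_BUILD` switch. It also assumes BuildKit is enabled; on Docker 18.09 the syntax line had to point at the experimental frontend (`docker/dockerfile:1.0-experimental`), while newer releases accept `docker/dockerfile:1`.

```shell
#!/bin/sh
# Sketch only: the secret id "npmrc", the image tag and the RUN_BUILD switch
# are illustrative assumptions, not part of the original discussion.
set -eu

workdir="$(mktemp -d)"

# Placeholder credentials file; in practice this comes from your secret store.
printf '//registry.npmjs.org/:_authToken=%s\n' "${NPM_TOKEN:-placeholder-token}" > "$workdir/npmrc"

# Minimal package.json so that `npm install` has something to read.
printf '{"name":"secret-demo","version":"1.0.0"}\n' > "$workdir/package.json"

# The secret is mounted at /root/.npmrc only while this RUN step executes,
# so it is neither written to a layer nor passed as a build arg.
cat > "$workdir/Dockerfile" <<'EOF'
# syntax=docker/dockerfile:1
FROM node:lts-alpine
WORKDIR /app
COPY package.json ./
RUN --mount=type=secret,id=npmrc,target=/root/.npmrc npm install
EOF

echo "Build context written to $workdir"

# Build only when explicitly requested; this needs Docker 18.09+ with BuildKit.
if [ "${RUN_BUILD:-0}" = "1" ]; then
  DOCKER_BUILDKIT=1 docker build \
    --secret id=npmrc,src="$workdir/npmrc" \
    -t secret-demo "$workdir"
fi
```

Running it with `RUN_BUILD=1` performs the build; afterwards `docker history --no-trunc secret-demo` should show the `RUN --mount` step without the token value.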

2018-11-25

A tool for exploring each layer in a docker image. Contribute to wagoodman/dive development by creating an account on GitHub.

2018-11-28

Has anyone used docker as a test environment for starting/stopping services using their init manager? I can’t figure out why none of the “your-favorite-OS-in-docker” images… support their standard init process / service manager.

None of the vendor images (Amazon Linux 1 or 2, Ubuntu) include their init system (upstart, systemd, sysvinit). [To their credit, CentOS does include instructions on how to create your own “Dockerfile for systemd base image” in their Docker Hub readme: https://hub.docker.com/_/centos/ ]

my concern is, that without such hoop jumping, native, installed packages that try to install/enable their /etc/init or systemd unit files or /etc/init.d files are going to behave differently than real systems running on the exact same OS.

not to mention the OSX support for running systemd is missing (it requires --cap-add=SYS_ADMIN, apparently only on OSX, to allow systemd to mount tmpfs)

cf. https://github.com/moby/moby/issues/30723

Hi, I did a bit of research around this and, even though it looks like there is a way to work around this problem, this is not applicable to Docker users running it on macOS. OS version: macOS Sie…

Actually it looks like I can (on OSX) at least get it to start without --cap-add and without --security-opt=seccomp:unconfined by using --tmpfs /tmp --tmpfs /run in the docker run command
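A rough sketch of that invocation, using only the two `--tmpfs` flags mentioned above. The image name `local/systemd-base`, the container name, the `/sbin/init` path, and the `RUN_CONTAINER` switch are all hypothetical; the image is assumed to have systemd installed, and other hosts or images may need additional flags.

```shell
#!/bin/sh
# Sketch only: the image name, container name and init path are hypothetical.
set -eu

image="${SYSTEMD_IMAGE:-local/systemd-base}"   # assumed: an image with systemd installed
init_path="${INIT_PATH:-/sbin/init}"           # assumed: location of init inside that image

# The two tmpfs mounts give systemd writable /tmp and /run inside the container.
run_cmd="docker run -d --name systemd-test --tmpfs /tmp --tmpfs /run $image $init_path"

echo "$run_cmd"

# Start the container only when explicitly requested.
if [ "${RUN_CONTAINER:-0}" = "1" ]; then
  $run_cmd
fi
```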

What is the scoop on running systemd in a container? A couple of years ago I wrote an article on Running systemd with a docker-formatted Container. Sadly, two years later if you google docker systemd this is still the article people see — it’s time for an update. This is a follow-up for my last article. Everything you …

is the requirement to use systemd inside of docker or to use systemd to start containers (e.g. like in CoreOS)?

actually, i’m not quite clear on the problem statement.

the goal I’m working toward is for packer to treat a docker builder the same as an EC2 builder… so that, assuming they both start with the same OS image, the resulting systems work as similarly as possible.

aha, i see

@catdevman does this sound familiar?

@catdevman was telling me yesterday that they use packer to build both their AMIs and docker images, and use Amazon Linux as the base for both.

that’s where I’d like to be

Thanks Erik. That sounds close. @catdevman when you have a moment, I’m interested to hear your strategy for using packer to create both docker images and AMIs

fwiw, when we’ve needed init in containers we’ve used s6 or dumb-init

s6 - skarnet’s small supervision suite

The folks at Yelp are
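As an illustration of the dumb-init option, a minimal sketch that writes a Dockerfile using it as PID 1. The base image, the `dumb-init-demo` tag, the placeholder `sleep` command, and the `RUN_BUILD` switch are assumptions for the example; it also assumes the `dumb-init` package from the Ubuntu 18.04 repositories, which installs to `/usr/bin/dumb-init`.

```shell
#!/bin/sh
# Sketch only: base image, tag and the RUN_BUILD switch are illustrative assumptions.
set -eu

workdir="$(mktemp -d)"

# dumb-init runs as PID 1, forwards signals to its child and reaps zombie processes.
cat > "$workdir/Dockerfile" <<'EOF'
FROM ubuntu:18.04
RUN apt-get update \
 && apt-get install -y --no-install-recommends dumb-init \
 && rm -rf /var/lib/apt/lists/*
ENTRYPOINT ["/usr/bin/dumb-init", "--"]
# Placeholder workload; replace with the real service command.
CMD ["sleep", "infinity"]
EOF

echo "Dockerfile written to $workdir/Dockerfile"

# Build only when explicitly requested.
if [ "${RUN_BUILD:-0}" = "1" ]; then
  docker build -t dumb-init-demo "$workdir"
fi
```

This supervises a single process; it does not emulate systemd or upstart unit handling, so packages that ship unit files would still behave differently from a full host.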

2018-11-29

yeah @tamsky I can share some of that information… the biggest part is that your starting point has to be the same, so for us we went with amazon linux and ubuntu. The only problem with this strategy is that docker assumes everything runs as root and doesn’t have sudo, which means become for ansible needs to be a variable that depends on what type of build you are doing.

@tamsky I have made separate packer files for ami and docker but the ansible playbooks are the same just different variables passed in. if you want to know more we can do pm

Thanks @catdevman,

that lines up 100% with my starting points (amazonlinux:2, ubuntu:18.04)

and matches where I’m at with packer+ansible.

I’d be interested to share my docker bits and ansible “hacks” that I’m using to remove the differences between container and AMI.

maybe we should have a #ansible channel? @Erik Osterman (Cloud Posse)

@tamsky sure thing!

2018-11-30

Hello people! Any recommendations for a docker-centric CI that just builds and uploads images, and utilizes caching?

because I am losing my s*** with travis ci

travis cannot into Docker

we use https://codefresh.io/

ahha I knew it !

all pipeline steps are containers

soo I was checking out the pricing, and 3 concurrent builds, which is what the pro subscription offers, won’t do

any idea how much it will cost to have, let’s say, 20 concurrent builds?

you need to discuss that with @Erik Osterman (Cloud Posse), he can provide more info and connect you with the Codefresh guys

(Y)

Thanks

one example how we use our Docker images in Codefresh pipelines https://github.com/cloudposse/github-status-updater#integrating-with-codefresh-cicd-pipelines

Command line utility for updating GitHub commit statuses and enabling required status checks for pull requests - cloudposse/github-status-updater

thanks @Andriy Knysh (Cloud Posse)


I just found this today: https://github.com/just-containers/s6-overlay interesting approach vs trying to support multiple flavors of init(1) within docker: sysv, upstart, systemd

s6 overlay for containers (includes execline, s6-linux-utils & a custom init) - just-containers/s6-overlay

That’s cool!