#security (2020-11)

Archive: https://archive.sweetops.com/security/

2020-11-03

2020-10-16 Github requested the additional 14 day grace period, with the hope of disabling the vulnerable commands after 2020-10-19.

2020-10-16 Project Zero grants grace period, new disclosure date is 2020-11-02.

2020-10-28 Project Zero reaches out, noting the deadline expires next week. No response is received.

2020-10-30 Due to no response and the deadline closing in, Project Zero reaches out to other informal Github contacts. The response is that the issue is considered fixed and that we are clear to go public on 2020-11-02 as planned.

2020-11-01 Github responds and mentions that they won't be disabling the vulnerable commands by 2020-11-02. They request an additional 48 hours, not to fix the issue, but to notify customers and determine a "hard date" at some point in the future.

2020-11-02 Project Zero responds that there is no option to further extend the deadline as this is day 104 (90 days + 14 day grace extension) and that the disclosure will be today.

Not sure how severe this is, but the timeline is pretty crazy

I recall seeing some deprecation notices of set-env

I know we still need to make some changes
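For anyone making the same changes: the deprecated form printed `::set-env name=NAME::value` to stdout, and the replacement is to append `NAME=value` to the file the runner exposes as `$GITHUB_ENV`. A minimal Python sketch of that replacement follows; the helper name `set_env` and the variable `DEPLOY_STAGE` are made up for illustration.

```python
import os
import uuid


def set_env(name: str, value: str, env_file: str) -> None:
    """Append one variable to the file GitHub Actions exposes as $GITHUB_ENV."""
    if "\n" in value:
        # Multi-line values use the heredoc-style form: NAME<<DELIM ... DELIM.
        # A random delimiter avoids clashing with the value's own content.
        delimiter = f"EOF_{uuid.uuid4().hex}"
        entry = f"{name}<<{delimiter}\n{value}\n{delimiter}\n"
    else:
        entry = f"{name}={value}\n"
    with open(env_file, "a", encoding="utf-8") as fh:
        fh.write(entry)


if __name__ == "__main__":
    # The runner sets GITHUB_ENV inside a workflow step; do nothing elsewhere.
    path = os.environ.get("GITHUB_ENV")
    if path:
        set_env("DEPLOY_STAGE", "staging", path)  # hypothetical variable
```

Because the value goes into a file rather than stdout, untrusted log output can no longer be interpreted as a workflow command, which is the point of the deprecation.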

Huh. When I reported a high vulnerability for GitHub Actions it was fixed in a couple days.

Interesting to see such a different response and timeline now

Weird... the API is intermittently not working

2020-11-05

2020-11-06

I have an ISO 27001-related question. I’m helping a partner with a customer who needs ISO 27001 compliance. They are developing with Lambda functions and DynamoDB. The question is about ‘Encryption at Rest’ of data in a cloud environment: is DynamoDB with KMS sufficient, or would it be important to add client-side encryption as well?

AFAIK - DynamoDB with KMS should be sufficient.
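For reference, a rough sketch of what "DynamoDB with KMS" looks like when the table is created with a customer-managed key. It only builds the arguments for boto3's `create_table`; the function name, the `pk` attribute, and the key alias are placeholders, and it says nothing about whether this alone satisfies an auditor.

```python
def encrypted_table_kwargs(table_name: str, kms_key_id: str) -> dict:
    """Build create_table arguments with server-side encryption under a KMS key."""
    return {
        "TableName": table_name,
        # Hypothetical single-attribute key schema, just to make the call complete.
        "AttributeDefinitions": [{"AttributeName": "pk", "AttributeType": "S"}],
        "KeySchema": [{"AttributeName": "pk", "KeyType": "HASH"}],
        "BillingMode": "PAY_PER_REQUEST",
        "SSESpecification": {
            "Enabled": True,
            "SSEType": "KMS",
            # Key ID, ARN, or alias of the customer-managed key.
            "KMSMasterKeyId": kms_key_id,
        },
    }


if __name__ == "__main__":
    kwargs = encrypted_table_kwargs("orders", "alias/orders-table-key")
    print(kwargs["SSESpecification"])
```

With boto3 installed and credentials configured, the result would be passed as `boto3.client("dynamodb").create_table(**kwargs)`.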

The scp command, which uses the SSH protocol to copy files between machines, is deeply wired into the fingers of many Linux users and developers — doubly so for those of us who still think of it as a more secure replacement for rcp. Many users may be surprised to learn, though, that the resemblance to rcp goes beyond the name; much of the underlying protocol is the same as well. That protocol is showing its age, and the OpenSSH community has considered it deprecated for a while. Replacing scp in a way that keeps users happy may not be an easy task, though.

2020-11-08

FBI blames intrusions on improperly configured SonarQube source code management tools.

2020-11-09

Not sure which channel this belongs in - https://github.com/lyft/cartography It looks interesting from the diagram, but I am not sure how easy or helpful it is for infrastructure smaller than Lyft’s

Cartography is a Python tool that consolidates infrastructure assets and the relationships between them in an intuitive graph view powered by a Neo4j database. - lyft/cartography

Funny, I developed my own: https://github.com/claranet/aws-inventory-graph

Explore your AWS platform with Dgraph, a graph database. - claranet/aws-inventory-graph

Interesting, I wonder how easy it is to write meaningful queries? (I am not familiar with graph databases myself, and from the examples I see in the README it looks rather easy to make a mistake. Scary syntax.)

It’s a mental exercise, I agree. It took me some time to figure out how to deal with it, but after some errors it became pretty convenient and I was able to retrieve all the information I needed

2020-11-17

If anyone’s interested, here’s how the Platform One program under the Air Force does automated OpenSCAP scanning of containers: https://repo1.dsop.io/dsop/jenkins-shared-library/-/blob/development/vars/dccscrPipeline.groovy#L194

Ah yes

/*

QUESTION:

Whether 'tis nobler in the mind to suffer

The slings and arrows of outrageously outdated guidance from our RPM distribution

Or to take Arms against a url scheme we don't control

and can only access from a connected environment

*/

I now know that my next hire needs to be someone with a passion for literature, to leave such gems in my codebase

2020-11-20

my website, which uses cert-manager with letsencrypt for tls, is sometimes showing an invalid (expired) certificate in incognito. The odd part is that this k8s cluster is new (hours old), but it’s sometimes showing a cert from 2 years ago. This cert is not on my cluster at all. Anyone run into this extremely weird case before?

My guess is that’s not an actual let’s encrypt cert

More like some default that’s preinstalled before the real one

Also, be very careful about using let’s encrypt with ephemeral clusters like you do

Certificates can only be reissued something like 5 times a week. It’s a hard limit, and when you hit it, all you can do is wait. You can’t pay for or request an upgrade of the limit.

@Erik Osterman (Cloud Posse) I actually hit that rate limit for the first time yesterday while debugging my issue. What’s worse is that I don’t store those tls secrets remotely after they get created, so they’re destroyed along with the clusters. Thankfully I had not deleted the last tls-secret for the cert still on the new cluster right before I started hitting the rate limit, or I would’ve seriously been in a pickle. I feel sick just thinking about being in that situation, and I’m definitely prioritizing also using Azure managed certs to avoid that ever happening.

I honestly thought the rate limit was like 50 certs in a week. I did not know it was so low (5 a week)

Phew glad you dodged the bullet on that one
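One way to avoid walking into that limit with ephemeral clusters is to check the issuance history before requesting another cert. A rough sketch, assuming the "5 per 7 days" figure quoted above applies to reissuing the same certificate and that you keep your own record of issuance timestamps; the function names are made up.

```python
from datetime import datetime, timedelta
from typing import Sequence

WEEKLY_LIMIT = 5            # assumption: the figure quoted in this thread
WINDOW = timedelta(days=7)  # sliding window, not a calendar week


def can_issue(previous: Sequence[datetime], now: datetime,
              limit: int = WEEKLY_LIMIT) -> bool:
    """True if fewer than `limit` issuances fall inside the last 7 days."""
    recent = [t for t in previous if now - WINDOW < t <= now]
    return len(recent) < limit


def next_allowed(previous: Sequence[datetime], now: datetime,
                 limit: int = WEEKLY_LIMIT) -> datetime:
    """Earliest time at which another issuance fits inside the window."""
    recent = sorted(t for t in previous if now - WINDOW < t <= now)
    if len(recent) < limit:
        return now
    # A slot frees up once the limit-th most recent issuance ages out.
    return recent[-limit] + WINDOW


if __name__ == "__main__":
    now = datetime(2020, 11, 20, 12, 0)
    history = [now - timedelta(days=d) for d in (1, 2, 3, 4, 5)]
    print(can_issue(history, now), next_allowed(history, now))
```

Backing up the TLS secret outside the cluster, as mentioned above, is still the more reliable fix; this only tells you when it is safe to ask again.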

What’s the benefit of azure managed certs over ACM?

@Erik Osterman (Cloud Posse) i can’t use ACM with my AKS clusters

Ah, didn’t even know you had stuff on #azure

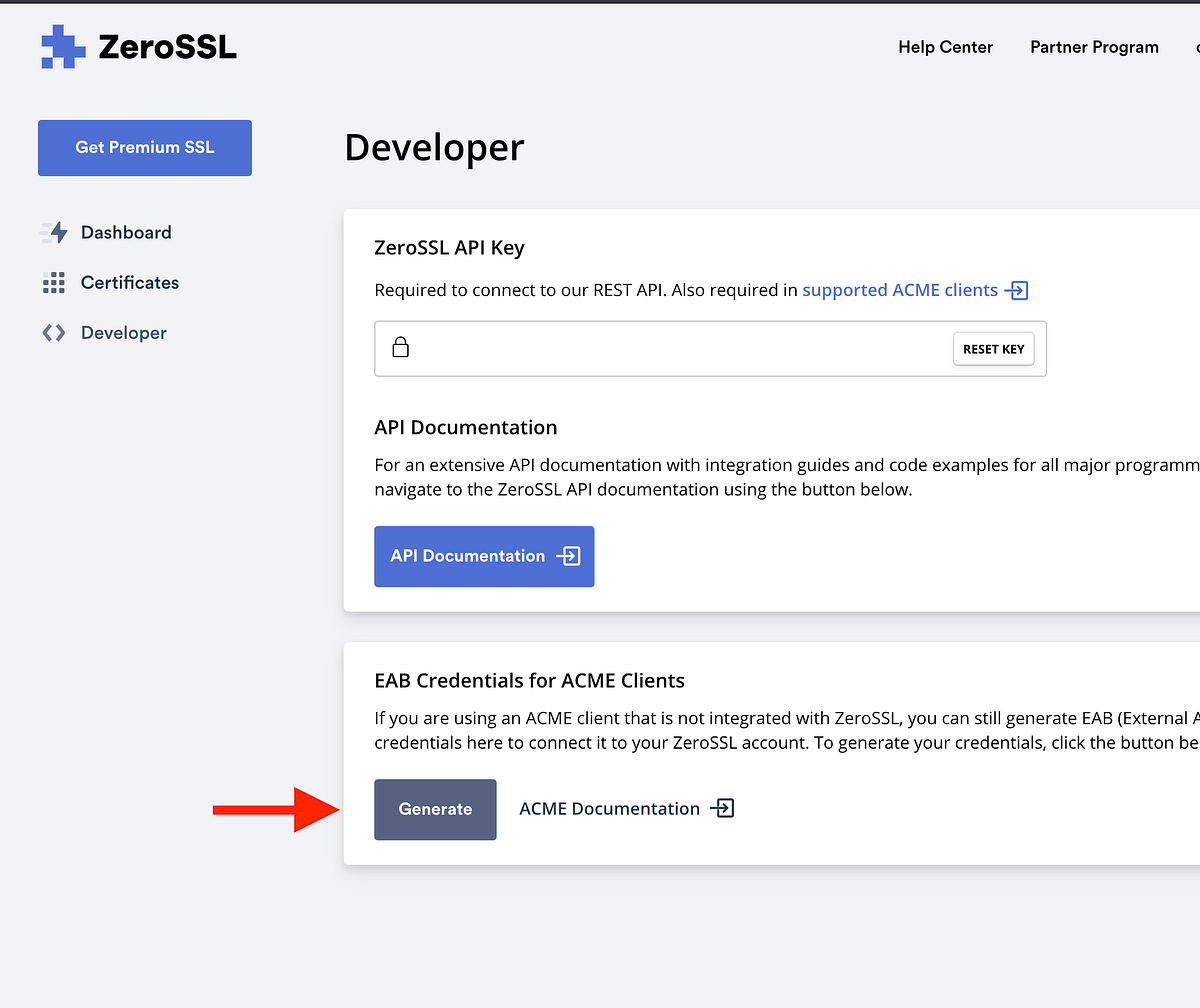

This just announced today on Hacker News: https://zerossl.com/pricing

Pricing for ZeroSSL, a free provider of 90-day and 1-year SSL certificates with Wildcards, SSL monitoring, ACME clients, a dedicated Certbot and REST API.

It speaks ACME protocol

You can pay a nominal amount for unlimited 90 day certs

interesting, would be interested in using it for in-cluster tls

since its ACME, we could technically configure cert-manager to use zerossl instead of letsencrypt?

Disclaimer; I love LetsEncrypt. Like, I really love it. It’s opened up SSL to the world and we’re better off as a result. But sometimes…

Yup, exactly
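A rough sketch of what that could look like: a cert-manager `ClusterIssuer` pointed at ZeroSSL's ACME directory, built as a Python dict and printed as JSON (which `kubectl apply -f -` accepts). The directory URL and the `externalAccountBinding` field names are as I recall them from the ZeroSSL and cert-manager docs, so verify them against your installed version; some cert-manager releases also expect a `keyAlgorithm` field there. The issuer name, secret names, and ingress class are placeholders.

```python
import json

# Assumption: ZeroSSL's ACME directory for 90-day DV certificates.
ZEROSSL_DIRECTORY = "https://acme.zerossl.com/v2/DV90"


def zerossl_cluster_issuer(email: str, eab_key_id: str,
                           eab_secret_name: str) -> dict:
    """Build a ClusterIssuer manifest that uses ZeroSSL instead of Let's Encrypt."""
    return {
        "apiVersion": "cert-manager.io/v1",
        "kind": "ClusterIssuer",
        "metadata": {"name": "zerossl"},  # placeholder name
        "spec": {
            "acme": {
                "server": ZEROSSL_DIRECTORY,
                "email": email,
                # Secret where cert-manager stores the ACME account key.
                "privateKeySecretRef": {"name": "zerossl-account-key"},
                # ZeroSSL requires External Account Binding credentials.
                "externalAccountBinding": {
                    "keyID": eab_key_id,
                    "keySecretRef": {"name": eab_secret_name, "key": "secret"},
                },
                # Placeholder solver; use whatever your cluster already uses.
                "solvers": [{"http01": {"ingress": {"class": "nginx"}}}],
            }
        },
    }


if __name__ == "__main__":
    manifest = zerossl_cluster_issuer("ops@example.com", "EAB_KEY_ID",
                                      "zerossl-eab")
    print(json.dumps(manifest, indent=2))
```

Existing `Certificate` resources would then only need their `issuerRef` switched to the new issuer.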

(side rant: and for the record, zeroSSL is a horrible brand name for a company that is totally about SSL. )

like an ecommerce site branding itself zeroDEALS, or like datadog rebranding to zeroVISIBILITY!

haha maybe it means

this is so cheap (relatively for our company) and so worth not worrying about letsencrypt rate limits at $10/mo or even $50/mo

agree about paying a measly $10/mo to eliminate a class of errors