#aws (2020-01)

Discussion related to Amazon Web Services (AWS)

Archive: https://archive.sweetops.com/aws/

2020-01-02

Does anyone know how to make an internal ALB with a private hosted zone use DNS cert validation?

also, for clarification, you want a public certificate for a private zone?

(because I think private certificates address this - https://docs.aws.amazon.com/acm/latest/userguide/gs-acm-request-private.html)

Request an ACM PCA private certificate.

cheaper to buy a domain with route53 and use public certs with a public zone than ACM Private CA

Definitely cheaper

we are using route53 private hosted zone, create a cname and attach internal alb endpoint to it

can do the same with a public zone instead. very easy. to answer the question, i think DNS cert validation will require a public zone, since the service needs to be able to perform a DNS lookup to validate the records ¯_(ツ)_/¯

that’s what i thought, was checking if it was possible to do public DNS with my current setup

the service does not have network access to your private zone to perform the DNS validation, i don’t think. not sure how that would work, would be some black magic involved…

does the public DNS need to resolve to something?

ACM will provide you with the DNS records that you need to enter. you are proving that you own the zone associated with the ACM cert request. so the zone (or zones, can be plural) needs to match all the cert names you provide to ACM

if you can’t get there, then the ACM PCA feature that @Erik Osterman (Cloud Posse) linked ought to work. it’s just expensive to run an ACM PCA ($400/month for the PCA, plus a charge per issued cert)

@loren thanks. I will check it out

When using nginx-ingress (installed via helmfile provider), is there a good way to get the load balancer CNAME into terraform for use with populating dns (e.g. [a92da7dd42d9c11ea9d19028a775bee0-865849920.us-east-2.elb.amazonaws.com](http://a92da7dd42d9c11ea9d19028a775bee0-865849920.us-east-2.elb.amazonaws.com))

Do not use terraform for that. Instead use the external-dns controller.

This is where the power of Kubernetes controllers really shines

E.g. use the cert-manager controller to automatically generate certificates

oh yea good point, I was getting confused (I do use external-dns and cert-manager). What I actually was driving at was gcp lets you assign a static ip to a load-balancer which can be passed in as a variable to helmfile and remain constant if for some reason the cluster had to be recreated (useful if passing this ip to a third party domain you don’t control). On AWS, I don’t think there’s an ability to control the CNAME so it will change between cluster creations, is that right?

On AWS to get a static IP and attach it to load balancer, you can use https://aws.amazon.com/global-accelerator/

thanks @Andriy Knysh (Cloud Posse) I’ll give that a shot.

hmm, so global accelerator gives multiple static ip addresses, but looks like nginx-ingress is only set up for one load-balancer ip address…. though I guess just choosing one might work with some loss of HA.

https://github.com/helm/charts/blob/master/stable/nginx-ingress/values.yaml#L243

Does anyone here use RDS IAM authentication feature ?

I am trying to figure out if we would still have to generate a token and use it in place of a password when RDS IAM authentication is enabled

If my app is running on EC2, can I just attach an IAM policy and get rid of using a password altogether?

The documentation also says "The authentication token has a limited lifetime of 15 minutes". Does that mean that once the token is generated, we have to use it within 15 minutes to make a connection?

yes, your app will have to use the mariadb driver to deal with the token expiry

so it will need to request a token every 15 min

once a connection is made to the database, why does the app have to make a connection every 15 mins using a new token?

because the token expires

it's like the password was changed

the docs detail how to do it, with some code examples

and once you are using the mariadb driver, you can just delete all the other users not using the driver

it's a specific MySQL driver that gets installed in the Aurora MySQL server

and you create the user in a specific way
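
A minimal sketch of the "new token every 15 minutes" part, assuming Python and boto3. My reading of the RDS IAM authentication docs is that the token is only checked when a connection is opened, so an established connection should keep working after the token expires; what the app needs is a fresh, unexpired token whenever its pool opens a new connection. The class and parameter names are made up for illustration; the only real API referenced is boto3's rds client `generate_db_auth_token(DBHostname=..., Port=..., DBUsername=...)`, which you would wrap and pass in as `generate`. For MySQL/Aurora MySQL, the "specific way" of creating the user is `CREATE USER ... IDENTIFIED WITH AWSAuthenticationPlugin AS 'RDS';`, and the docs also expect IAM-authenticated connections to use SSL/TLS.

```python
import time
from typing import Callable, Optional

# RDS IAM authentication tokens are valid for 15 minutes after generation.
TOKEN_LIFETIME_S = 15 * 60


class IamAuthTokenCache:
    """Hands out an IAM auth token to use as the password for NEW connections.

    The token is regenerated once it is within `refresh_margin_s` of expiring.
    `generate` is assumed to wrap boto3, for example:
        rds = boto3.client("rds")
        generate = lambda: rds.generate_db_auth_token(
            DBHostname=host, Port=3306, DBUsername="app_user")
    """

    def __init__(self, generate: Callable[[], str], refresh_margin_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._generate = generate
        self._margin = refresh_margin_s
        self._clock = clock
        self._token: Optional[str] = None
        self._issued_at = 0.0

    def get(self) -> str:
        now = self._clock()
        expiring_soon = now - self._issued_at >= TOKEN_LIFETIME_S - self._margin
        if self._token is None or expiring_soon:
            self._token = self._generate()
            self._issued_at = now
        return self._token
```

Call `cache.get()` wherever the driver or pool opens a connection (e.g. a pool's connection factory); connections that are already open are left alone.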

2020-01-03

I have an ACM question, are the following certs the same? (I’m trying to understand what ‘additional names’ are for) cert a:

domain name: test.domain.com

additional name: *.test.domain.com

cert b:

domain name: *.test.domain.com

additional name: -

cert b will not cover [test.domain.com](http://test.domain.com)

just all subdomains

so for example, cert b will not cover test.domain.com but aaa.test.domain.com ?

Yes
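
To make the rule concrete, a small Python sketch of how a wildcard name matches: `*.` stands for exactly one DNS label (standard TLS hostname matching, not something ACM-specific). The function names are hypothetical.

```python
from typing import Sequence


def cert_name_covers(cert_name: str, hostname: str) -> bool:
    """True if a single certificate name covers hostname.

    "*.test.domain.com" covers "aaa.test.domain.com", but neither
    "test.domain.com" nor "a.b.test.domain.com".
    """
    cert_labels = cert_name.lower().rstrip(".").split(".")
    host_labels = hostname.lower().rstrip(".").split(".")
    if len(cert_labels) != len(host_labels):
        return False
    if cert_labels[0] == "*":
        # The wildcard consumes only the leftmost label; the rest must match exactly.
        return host_labels[0] != "" and cert_labels[1:] == host_labels[1:]
    return cert_labels == host_labels


def cert_covers(names: Sequence[str], hostname: str) -> bool:
    """A certificate covers hostname if its domain name or any additional name does."""
    return any(cert_name_covers(name, hostname) for name in names)


cert_a = ["test.domain.com", "*.test.domain.com"]  # domain name + additional name
cert_b = ["*.test.domain.com"]

for host in ["test.domain.com", "aaa.test.domain.com", "a.b.test.domain.com"]:
    print(host, cert_covers(cert_a, host), cert_covers(cert_b, host))
# test.domain.com True False
# aaa.test.domain.com True True
# a.b.test.domain.com False False
```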

so its more ideal to go with cert a?

essentially cert a will cover [test.domain.com](http://test.domain.com) and all subdomains?

yes, create it exactly as cert a

since you use a star for subdomains (all of them), if you use DNS validation ACM will generate two identical records to put into the DNS zone

and since the records are the same, you can add just one of them and all will work

but if you request a cert for test.domain.com and www.test.domain.com only, ACM will generate two different records, and you will need to put both of them into DNS
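
A sketch of why cert a needs only one validation record, assuming (as described above) that ACM validates `*.name` with the same record as `name`: grouping the certificate's names by the domain actually being validated gives one group per CNAME you have to create. The helper names are hypothetical; the real record names and values still come from the ACM console or `aws acm describe-certificate`.

```python
from typing import Dict, List, Sequence


def validated_domain(cert_name: str) -> str:
    """Domain whose validation record covers this name: "*.x.com" -> "x.com"."""
    name = cert_name.lower().rstrip(".")
    return name[2:] if name.startswith("*.") else name


def group_by_validation_record(names: Sequence[str]) -> Dict[str, List[str]]:
    """One key per validation CNAME to create; values are the cert names it covers."""
    groups: Dict[str, List[str]] = {}
    for name in names:
        groups.setdefault(validated_domain(name), []).append(name)
    return groups


print(group_by_validation_record(["test.domain.com", "*.test.domain.com"]))
# {'test.domain.com': ['test.domain.com', '*.test.domain.com']}  (one record)
print(group_by_validation_record(["test.domain.com", "www.test.domain.com"]))
# {'test.domain.com': ['test.domain.com'], 'www.test.domain.com': ['www.test.domain.com']}  (two records)
```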

2020-01-07

has anyone used IAM Roles for Service Accounts with EKS and a cluster of just Managed Node Groups with the cluster-autoscaler (v1.14.7)?

The role has the required permissions and it is getting injected into autoscaler pod.

Aren’t the details (role ARN) added as annotations to the service account (metadata)? Away from computer (on leave)..

yes, I added it there. That gets picked up and then AWS have a mutating admission controller which injects the role and tokenfile to the pod.

we tested IAM roles for service accounts for EKS using managed Node Group https://github.com/cloudposse/terraform-aws-eks-node-group. Worked great. Maybe this will help:

Here is the Role for external-dns:

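```hcl
# Excerpt: the input variables and data.aws_ssm_parameter.eks_cluster_identity_oidc_issuer_url
# are defined elsewhere in the module and are not shown here.
locals {
  # IAM refers to the OIDC provider by its issuer URL without the "https://" scheme
  eks_cluster_identity_oidc_issuer = replace(data.aws_ssm_parameter.eks_cluster_identity_oidc_issuer_url.value, "https://", "")
}

module "label" {
  source     = "git::https://github.com/cloudposse/terraform-null-label.git?ref=tags/0.16.0"
  namespace  = var.namespace
  name       = var.name
  stage      = var.stage
  delimiter  = var.delimiter
  attributes = compact(concat(var.attributes, ["external-dns"]))
  tags       = var.tags
}

resource "aws_iam_role" "default" {
  name               = module.label.id
  description        = "Role that can be assumed by external-dns"
  assume_role_policy = data.aws_iam_policy_document.assume_role.json

  lifecycle {
    create_before_destroy = true
  }
}

# Trust policy: only the given Kubernetes service account, authenticated through
# the cluster's OIDC provider, may assume this role.
data "aws_iam_policy_document" "assume_role" {
  statement {
    actions = ["sts:AssumeRoleWithWebIdentity"]
    effect  = "Allow"

    principals {
      type        = "Federated"
      identifiers = [format("arn:aws:iam::%s:oidc-provider/%s", var.aws_account_id, local.eks_cluster_identity_oidc_issuer)]
    }

    condition {
      test     = "StringEquals"
      variable = format("%s:sub", local.eks_cluster_identity_oidc_issuer)
      values   = [format("system:serviceaccount:%s:%s", var.kubernetes_service_account_namespace, var.kubernetes_service_account_name)]
    }
  }
}

resource "aws_iam_role_policy_attachment" "default" {
  role       = aws_iam_role.default.name
  policy_arn = aws_iam_policy.default.arn

  lifecycle {
    create_before_destroy = true
  }
}

resource "aws_iam_policy" "default" {
  name        = module.label.id
  description = "Grant permissions for external-dns"
  policy      = data.aws_iam_policy_document.default.json
}

# Not part of the original snippet; assumed here: the Route 53 permissions
# listed in the external-dns AWS tutorial.
data "aws_iam_policy_document" "default" {
  statement {
    effect    = "Allow"
    actions   = ["route53:ChangeResourceRecordSets"]
    resources = ["arn:aws:route53:::hostedzone/*"]
  }

  statement {
    effect    = "Allow"
    actions   = ["route53:ListHostedZones", "route53:ListResourceRecordSets"]
    resources = ["*"]
  }
}
```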

and here is how external-dns service account was annotated with that Role: https://github.com/cloudposse/helmfiles/pull/207/files#diff-40daec15ea9ebdc2aed4f62abba406c3R63

after all of that was deployed and EKS started a few instances from Node Group, external-dns was able to assume the role and add records to Route53 for other services

thanks, I’ll give that a try to see what I have different

ugh... needed to include fullnameOverride: "cluster-autoscaler" in the helmfile; it autogenerates a different SA name. Works now.
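
For anyone hitting the same thing: the role's trust policy (the `assume_role` document in the Terraform above) matches exactly one `system:serviceaccount:<namespace>:<name>` subject, so if the chart generates a different service account name, assuming the role via web identity fails. Below is a Python sketch of roughly the JSON that document renders to, plus a check for a given service account. The account ID, issuer URL and the release-prefixed SA name in the example are placeholders.

```python
from typing import Any, Dict


def irsa_trust_policy(account_id: str, oidc_issuer_url: str,
                      namespace: str, service_account: str) -> Dict[str, Any]:
    # Same transformation as the Terraform local: drop the "https://" scheme.
    issuer = oidc_issuer_url.replace("https://", "")
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{issuer}"},
            "Condition": {"StringEquals": {
                f"{issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}",
            }},
        }],
    }


def trust_allows(policy: Dict[str, Any], namespace: str, service_account: str) -> bool:
    """True if some statement's :sub condition names exactly this service account."""
    wanted = f"system:serviceaccount:{namespace}:{service_account}"
    for stmt in policy["Statement"]:
        for key, value in stmt.get("Condition", {}).get("StringEquals", {}).items():
            if key.endswith(":sub") and value == wanted:
                return True
    return False


policy = irsa_trust_policy("111122223333",
                           "https://oidc.eks.us-east-2.amazonaws.com/id/EXAMPLE",
                           "kube-system", "cluster-autoscaler")
print(trust_allows(policy, "kube-system", "cluster-autoscaler"))             # True
print(trust_allows(policy, "kube-system", "my-release-cluster-autoscaler"))  # False
```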

You may have already received an email or seen a console notification, but I don’t want you to be taken by surprise! Rotate Now If you are using Amazon Aurora, Amazon Relational Database Service (RDS), or Amazon DocumentDB and are taking advantage of SSL/TLS certificate validation when you connect to your database instances, you need […]

2020-01-08

Anyone know how I can skip text when using parse on Cloudwatch Insights? I have parse message '* src="*" dst="*" msg="*" note="*" user="*" devID="*" cat="*"*' as _, src, dst, msg, note, user, devID, cat, other but I’d like to discard everything before src=

apparently there’s | display field1, field2, which filters which fields Insights displays

(and it works on ephemeral fields created by parse)
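
Roughly what `parse` plus `display` is doing, as a Python emulation (not how Insights is implemented): each `*` in the glob becomes a non-greedy capture assigned to the next name after `as`, and the leading capture bound to `_` simply never gets displayed. In Insights itself that is the original `parse` followed by something like `| display src, dst, msg`. The sample log line is made up.

```python
import re
from typing import Dict, Iterable, List, Optional


def glob_parse(message: str, pattern: str, names: List[str]) -> Optional[Dict[str, str]]:
    """Emulate a glob-style parse: every * becomes a lazy (.*?) capture group."""
    regex = "(.*?)".join(re.escape(part) for part in pattern.split("*"))
    match = re.fullmatch(regex, message, flags=re.DOTALL)
    if match is None:
        return None
    return dict(zip(names, match.groups()))


def display(fields: Dict[str, str], wanted: Iterable[str]) -> Dict[str, str]:
    """Keep only the requested fields, like `| display a, b`."""
    return {name: fields[name] for name in wanted if name in fields}


pattern = '* src="*" dst="*" msg="*" note="*" user="*" devID="*" cat="*"*'
names = ["_", "src", "dst", "msg", "note", "user", "devID", "cat", "other"]
line = ('<134>fw01 id=firewall src="10.0.0.1" dst="10.0.0.2" msg="allowed" '
        'note="none" user="alice" devID="fw01" cat="web" extra')

fields = glob_parse(line, pattern, names)
print(display(fields, ["src", "dst", "msg"]))
# {'src': '10.0.0.1', 'dst': '10.0.0.2', 'msg': 'allowed'}
```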

2020-01-09

I don’t see the Opt-In option for new ARN format in ECS

Has anyone come across that before?

I am getting “The new ARN and resource ID format must be enabled to add tags to the service. Opt in to the new format and try again.” from TF, but not sure how to resolve

Just hit this same thing this morning. Solved with: aws ecs put-account-setting-default --name serviceLongArnFormat --value enabled
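
The same opt-in from Python, assuming boto3: `put_account_setting_default` is the API behind the CLI command above and sets the account-wide default for IAM users and roles that haven't chosen their own setting. I believe these settings are per Region, so run it in each Region you use. The function name is hypothetical, and enabling the task and container-instance formats as well is an optional extra, not something the thread required.

```python
from typing import Iterable, List

# The three ECS resource types that had an opt-in long ARN / resource ID format.
LONG_ARN_SETTINGS = (
    "serviceLongArnFormat",
    "taskLongArnFormat",
    "containerInstanceLongArnFormat",
)


def enable_long_arn_formats(ecs_client, settings: Iterable[str] = LONG_ARN_SETTINGS) -> List[str]:
    """Set each setting's account default to "enabled" and return the names set.

    ecs_client is expected to be boto3.client("ecs", region_name=...).
    """
    enabled = []
    for name in settings:
        ecs_client.put_account_setting_default(name=name, value="enabled")
        enabled.append(name)
    return enabled
```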

Thanks

I’m getting below error. Is this the right forum to ask for help?

```
terraform init
Initializing modules...
Downloading cloudposse/ecs-container-definition/aws 0.21.0 for ecs-container-definition...

Error: Failed to download module

Could not download module "ecs-container-definition" (ecs.tf:106) source code
from
"https://api.github.com/repos/cloudposse/terraform-aws-ecs-container-definition/tarball/0.21.0//*?archive=tar.gz":
Error opening a gzip reader for
```

we have no control over those tarball URLs

that’s the correct one provided by github

perhaps share your terraform code?

Just started to get the same oddly

```hcl
source  = "terraform-aws-modules/autoscaling/aws"
version = "~> 3.0"
```

odd

in my case updating terraform from .16 to .19 seemed to correct it so maybe a blip or maybe something broke. Hard to tell

On a serious note, I wonder how GitHub feels about all the terraformers downloading tarballs of modules. Can you imagine the thousands and thousands of tarballs being requested per second!

Microsoft can afford the bandwidth

@Bernhard Lenz better to use #terraform

Tx

2020-01-10

Anyone have experience with how much overhead RDS postgres multi-az adds? I was doing some testing on tiny instances and the added replication seemed to add a decent amount (20-30% CPU) but that was a tiny t3.small instance

don’t use tiny instances.

We don’t, but I haven’t tried every instance type to see if that impact was only because it was so small or whether replication actually adds a decent bit of overhead

Smaller instance types of anything aren’t going to give you an accurate impact assessment. How about you try using the instance types you are currently using in production.

The point of the question was to see if someone’s already done this

I don’t have an answer, but it seems a common complaint, explained in more detail: https://stackoverflow.com/questions/47162231/rds-multi-az-bottlenecking-write-performance/50441734#50441734

I suppose the amount of overhead would depend on many, many factors: amount of data written, size of data written, randomness, I/O characteristics of the instance. The t3 class will probably be the worst to use as a reference instance since they are burstable.

multi-AZ will always have (some) performance impact since Aurora does replication synchronously

Say I upload two files to an S3 bucket for a website multiple times per day: index.html and some_file.some_cache_busting_string.js, and the new version of index.html that is uploaded always references the most recent js file.

index.html is a much smaller file than the js file, so when I upload these files the html file will typically complete its upload first.

If someone visits my site during the time after the HTML is uploaded, but before the js finishes uploading, the page will fail to load.

I’m guessing I’m not the first person to experience this, so how do you all handle this?

Do you have versioning enabled?

AFAIK, it would serve old version of js file if replacing js file is not completed

sounds like a good puzzle to solve :slightly_smiling_face: could be done by uploading files to S3 sequentially or using this in index.html (in onerror, try to load the old file) https://javascript.info/onload-onerror
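
Another option, sketched in Python: upload the fingerprinted assets first and index.html last, and only after every asset upload has succeeded, so the HTML never references a JS file that isn't there yet (and keep the previous assets around for visitors still holding the old HTML). The `upload` callback is hypothetical; with boto3 it could wrap `s3.upload_file(local_path, bucket, key, ExtraArgs={"CacheControl": cache_control})`. The Cache-Control values are a common choice, not a requirement.

```python
from typing import Callable, Iterable, List, Tuple

LONG_CACHE = "public, max-age=31536000, immutable"  # fingerprinted assets never change
NO_CACHE = "no-cache"                              # HTML must be revalidated


def upload_plan(keys: Iterable[str]) -> List[Tuple[str, str]]:
    """Return (key, Cache-Control) pairs with all HTML pages last."""
    keys = list(keys)
    assets = sorted(k for k in keys if not k.endswith(".html"))
    pages = sorted(k for k in keys if k.endswith(".html"))
    return [(k, LONG_CACHE) for k in assets] + [(k, NO_CACHE) for k in pages]


def publish(keys: Iterable[str], upload: Callable[[str, str], None]) -> None:
    # Sequential on purpose: if an asset upload raises, index.html is never replaced.
    for key, cache_control in upload_plan(keys):
        upload(key, cache_control)


print(upload_plan(["index.html", "some_file.3f9a2c.js"]))
# [('some_file.3f9a2c.js', 'public, max-age=31536000, immutable'), ('index.html', 'no-cache')]
```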

Hey team, am running a Hugo static site with the cloudposse CDN module with great results. The site was converted from a LAMP stack, minus the deprecated CMS. Looking to replace the phpmailer email form and came across this module. https://github.com/cloudposse/terraform-aws-ses-lambda-forwarder Wonder if I could stitch this and AWS API Gateway together to handle the form POST, as a few blogs describe? Preferably in terraform, but I haven’t used these services before. Will need to find a way to avoid spam usage.

I would recommend a js embed to replace the forms

We use HubSpot

And outgrow

Thanks Erik, been looking at formtree but looks like plenty about. Indeed seems a lot simpler.

2020-01-11

2020-01-12

Hi guys! I’ve hit the ALB hard limit of 1000 targets per ALB. I tried to list the targets that belong to the ALB using the AWS CLI, but I didn’t find such an option. Has anyone faced this issue or found a workaround?
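
There's no single "targets of this ALB" call that I know of, but you can go through its target groups: `aws elbv2 describe-target-groups --load-balancer-arn <arn>`, then `aws elbv2 describe-target-health --target-group-arn <tg-arn>` for each one. A Python/boto3 sketch of the same, counting both registrations per target group and distinct targets, since I'm not sure which of the two the quota counts. Pagination of `describe_target_groups` is ignored for brevity, and the function name is hypothetical.

```python
from collections import Counter
from typing import Tuple


def alb_target_counts(elbv2, load_balancer_arn: str) -> Tuple[Counter, int]:
    """Count targets behind one ALB.

    elbv2 is expected to be boto3.client("elbv2"). Returns registrations per
    target group name and the number of distinct (target id, port) pairs.
    """
    per_group: Counter = Counter()
    distinct = set()
    groups = elbv2.describe_target_groups(LoadBalancerArn=load_balancer_arn)["TargetGroups"]
    for tg in groups:
        health = elbv2.describe_target_health(TargetGroupArn=tg["TargetGroupArn"])
        for desc in health["TargetHealthDescriptions"]:
            target = desc["Target"]
            per_group[tg["TargetGroupName"]] += 1
            distinct.add((target["Id"], target.get("Port")))
    return per_group, len(distinct)
```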

2020-01-13

Hi guys, I have a question about EKS: "You pay 0.20 USD per hour for each Amazon EKS cluster that you create" (from https://aws.amazon.com/eks/pricing/). Does it apply to any type of EKS configuration (EC2, Fargate)? Do you know why it is so expensive? It is almost $144/month. Is it the price for the k8s service containers?

That’s the price for ‘just’ the control plane. Not cheap. Otoh, setting up a HA control plane on EC2 will likely set you back for something similar or higher.

factor in the number of hours that you’ll spend setting it up and keeping it running, and it’s inexpensive IMO

Maybe try EKS on Fargate, looks like you’re only charged for EKS as long as your pod is running

But yeah, that actually seems cheap to me too

does this (HA control plane on EC2) mean that I have to remove eks and deploy my own k8s into EC2s?

that being said, I don’t use EKS…my team is only running two containers (currently) so we’re using Fargate/ECS

That would be the alternative, yes (e.g. using Kops). But e.g. 3 m5.large instances will be as expensive. And you’ll not be able to use EKS managed nodes or EKS Fargate.
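
The arithmetic behind these numbers, as a tiny Python helper. It assumes about 730 hours per month (the convention AWS pricing pages tend to use; 720 hours gives the $144 figure above) and an on-demand m5.large price of $0.096/hour, which is an assumption to check against current pricing for your Region. Self-managed masters also need EBS volumes, load balancers and your time, which this ignores.

```python
HOURS_PER_MONTH = 730


def monthly_cost(hourly_usd: float, count: int = 1, hours: float = HOURS_PER_MONTH) -> float:
    """Monthly cost of `count` resources billed per hour, rounded to cents."""
    return round(hourly_usd * count * hours, 2)


eks_control_plane = monthly_cost(0.20)                 # EKS cluster fee
eks_30_days = monthly_cost(0.20, hours=720)            # the ~$144 figure above
three_m5_large = monthly_cost(0.096, count=3)          # assumed m5.large price
print(eks_control_plane, eks_30_days, three_m5_large)  # 146.0 144.0 210.24
```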

I’m using ECS as well, but I was starting to look into k8s, and I was unpleasantly surprised about price

@TBeijen thank you.

2020-01-14

Hi guys, does anyone have experience with EMR auto scaling for the Presto app? We get errors in Presto when EMR scales down; it looks like EMR doesn’t support graceful shutdown for Presto because it isn’t managed by YARN. Is there any workaround? Are we doing something wrong?

Does anyone know where I can find an example of using terraform to add a trigger to a lambda function? I found aws_lambda_event_source_mapping but it seems to only support event streaming from Kinesis, DynamoDB, and SQS. I want to add a trigger for changes in an S3 object.

Manages a S3 Bucket Notification Configuration

Thanks Joe!!

No worries. How are you finding deploying lambda functions with Terraform?

Love deploying lambda with terraform. It’s phenomenal. Especially when using the claranet/terraform-aws-lambda module

I shall look into that, thanks @loren. Have been looking for alternatives to the Serverless framework.

If you use api gateway extensively, then terraform is rather more involved to get working than serverless. But for basic lambda and integrating with other aws services, terraform is way better. And you can do api gateway also, you just need to really learn some of the complexities where serverless makes a lot of choices for you to simplify the basic interface

That’s been my takeaway from using both

Thank you for that. Current project involves kinesis stream consumers so it could work well.

2020-01-15

Has anyone tried using the reference architecture with a .ai domain? You cannot register .ai domains in AWS. I am wondering if this would work if, after provisioning, the SOA record in the apex domain’s hosted zone were deleted, and name servers at the registrar pointed to the name servers in that same zone?

@Joe Hosteny haven’t tried… but don’t see why not? ref arch does not register TLD. it just creates the zones.

so if you can create the zones, then it’s fine.

after provisioning, the SOA record in the apex domain’s hosted zone were deleted, and name servers at the registrar pointed to the name servers in that same zone?

please share context (also lets use #geodesic)

office hours starting in 10m: https://cloudposse.com/office-hours

2020-01-17

Hey peeps

I am trying to add a config rule to Landing Zone so it can be created across accounts

There’s very few (if any) tutorials on LZ - all I see are videos and articles on why LZ is good and what it does and its advantages, but barely any meaningful examples

so my CodePipeline pipelines are passing after adding the custom config rule to some template

I have also added the lambda that should get invoked (both source code and definition in the template) as well as the permissions required to execute the lambda

After the pipeline executes successfully (which takes forever to complete), the resources are still not created and at this point, I am not sure what it is that I need to understand between cloudformation and LZ

It’s hard to troubleshoot when everything is passing

Any pointers to some resource which can help me understand LZ like a 5 year old?

@Mike Crowe any resources you found helpful?

@Erik Osterman (Cloud Posse) not really, just some assistance from a friend who explained the manifest file. Still learning the ropes - info seems scanty on it

Nice little extension from a coworker: https://addons.mozilla.org/en-US/firefox/addon/amazon-web-search/

Lets you create a shortcut to open up the AWS console service list / search bar - and most importantly lets you hit esc to close it

2020-01-18

@Mike Crowe sound familiar?

Ha ha btdt

AWS NAT gateways are stupidly expensive for what they do

And not documented in control tower

2020-01-19

i have to think that they’re just spinning up multiple ec2 instances to do NAT or something like that; for the price that you pay it would have to be something super inefficient

Was thinking about this the other day… at least modifying my terraform so that the “spoke” VPCs just get a single NAT gateway instead of 3x… but then I guess you start paying cross-AZ for two-thirds of egress traffic anyway, so I dunno

2020-01-20

can also use transit gateway to route egress traffic through one vpc, and just pay for one set of nat gateways

EC2 instances doing NAT i think

2020-01-21

2020-01-22

hey folks, a client of mine is using the AWS SSO to manage all access creds. I’m having some trouble finding a nice solution for using this with terraform.

Currently I am logging into the aws sso page, choosing the aws account, clicking “Command line or programmatic access” and copy pasting the provided env vars into my shell.

I have to do this every hour

Both aws-vault and terraform have tickets open about AWS SSO, but has anyone come up with a decent workaround?

AWS recommends that we give access/secret keys only to physical users. Machines or systems should instead assume IAM roles (e.g. an EC2 instance should never use access keys passed as environment variables).

However, how does this best practice work in real life when the system that communicates with AWS is a third party one (e.g. GitHub actions). Can I make a GitHub Actions CI pipeline communicate with AWS APIs without creating an IAM user for it? After all, GitHub is a machine, not a human. It should use IAM roles, right?

AWS means that within the AWS ecosystem. If you have a custom GitHub runner in AWS, you can use an IAM role

So if I use a real third party solution like Jenkins, GitHub actions, CircleCI - I have no other option but to resort to IAM users and secret keys per provider?

Yes

Yup

did you try aws-cli v2, and its new integration with aws sso? login with that to get the creds for the profile, then run terraform? https://docs.aws.amazon.com/cli/latest/userguide/cli-configure-sso.html

I ended up using aws cli v2 with a little wrapper https://github.com/linaro-its/aws2-wrap

Replaces aws-vault in my normal setup
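
For reference, the CLI v2 SSO setup boils down to a profile like the one this Python snippet renders for `~/.aws/config` (`aws configure sso` can write it for you interactively). After `aws sso login --profile <name>`, a wrapper such as aws2-wrap can hand the cached credentials to Terraform. The profile name, portal URL, account ID and role name below are placeholders.

```python
import configparser
import io


def sso_profile_ini(name: str, start_url: str, sso_region: str,
                    account_id: str, role_name: str, region: str) -> str:
    """Render an AWS CLI v2 SSO profile section for ~/.aws/config."""
    config = configparser.ConfigParser()
    config[f"profile {name}"] = {
        "sso_start_url": start_url,
        "sso_region": sso_region,
        "sso_account_id": account_id,
        "sso_role_name": role_name,
        "region": region,
    }
    out = io.StringIO()
    config.write(out)
    return out.getvalue()


print(sso_profile_ini("client-dev", "https://my-sso-portal.awsapps.com/start",
                      "us-east-1", "111122223333", "AdministratorAccess", "us-east-1"))
```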

Depending on your integration, one thing you can also consider is having some privileged task inside your infra deliver STS credentials to the third party service.

That means your infra code has to have creds to the service, but that may be more palatable

may be able to use the credential_process feature of the aws shared config file to retrieve temporary creds, if the integration is running in something that gives you that kind of access, https://docs.aws.amazon.com/cli/latest/userguide/cli-configure-sourcing-external.html
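
A sketch of the credential_process side in Python: the configured command just has to print JSON with `Version` 1, the key ID, secret, session token and (optionally) an ISO 8601 `Expiration` on stdout. Here it converts an STS AssumeRole-style response, which could also be how a privileged task hands short-lived credentials to a third party, as suggested above. The function name and sample values are made up; in real use the response would come from something like `boto3.client("sts").assume_role(RoleArn=..., RoleSessionName=...)`.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict


def credential_process_output(sts_response: Dict[str, Any]) -> str:
    """Format STS credentials as the JSON that credential_process must print."""
    creds = sts_response["Credentials"]
    expiration = creds["Expiration"]
    if isinstance(expiration, datetime):
        # boto3 returns an aware datetime; emit ISO 8601 in UTC.
        expiration = expiration.astimezone(timezone.utc).isoformat()
    return json.dumps({
        "Version": 1,
        "AccessKeyId": creds["AccessKeyId"],
        "SecretAccessKey": creds["SecretAccessKey"],
        "SessionToken": creds["SessionToken"],
        "Expiration": expiration,
    })


sample = {"Credentials": {
    "AccessKeyId": "ASIAEXAMPLE",
    "SecretAccessKey": "example-secret",
    "SessionToken": "example-token",
    "Expiration": datetime(2020, 1, 22, 13, 0, tzinfo=timezone.utc),
}}
print(credential_process_output(sample))
```

The matching `~/.aws/config` entry would be `credential_process = /path/to/that/script` under the profile.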

what is the best way to implement API authentication for REST API built in nodejs (not AWS Gateway) ?

Most people use passport.js

yeah i noticed that

Little background - our apps are deployed in AWS but the APIs are not deployed to AWS gateway

so all our apps need to talk to our APIs

Passport is the right choice for api authentication in node

currently we have these APIs sitting in private layer

what do you mean by private layer?

private subnet

how does your web app JS access them?

so the app is deployed in public subnet and it has access to the APIs sitting in private subnet

using security groups

I think you’re referring to server-to-server API calls?

yes

Passport is still fine. The passport-localapikey strategy is a simple option.
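
passport-localapikey does this in Node; the underlying idea, sketched in Python, is checking a shared API key on server-to-server calls with a constant-time comparison. The `x-api-key` header name and the function name are assumptions, and headers are assumed to arrive lower-cased from the framework. Keys would normally be loaded from something like Secrets Manager or SSM rather than hard-coded.

```python
import hmac
from typing import Iterable, Mapping


def api_key_valid(headers: Mapping[str, str], valid_keys: Iterable[str],
                  header: str = "x-api-key") -> bool:
    """Return True if the request carries one of the accepted API keys."""
    presented = headers.get(header, "")
    if not presented:
        return False
    # compare_digest avoids short-circuiting on the first differing byte.
    return any(hmac.compare_digest(presented.encode(), key.encode()) for key in valid_keys)
```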

thanks for your suggestion

i would like to also understand how complex would it be to use AWS API gateway

With the new HTTP API feature (in beta though) it’s not too bad, but your API will still need to generate JWTs

If you use REST API you could require an API key on certain methods or use a custom authoriser or require a certain request header. There are lots of options. It just depends on your needs.

yeah i was looking at HTTP API

any ideas and thoughts are appreciated

2020-01-23

https://awsapichanges.info/ - not sure if anyone else posted it earlier

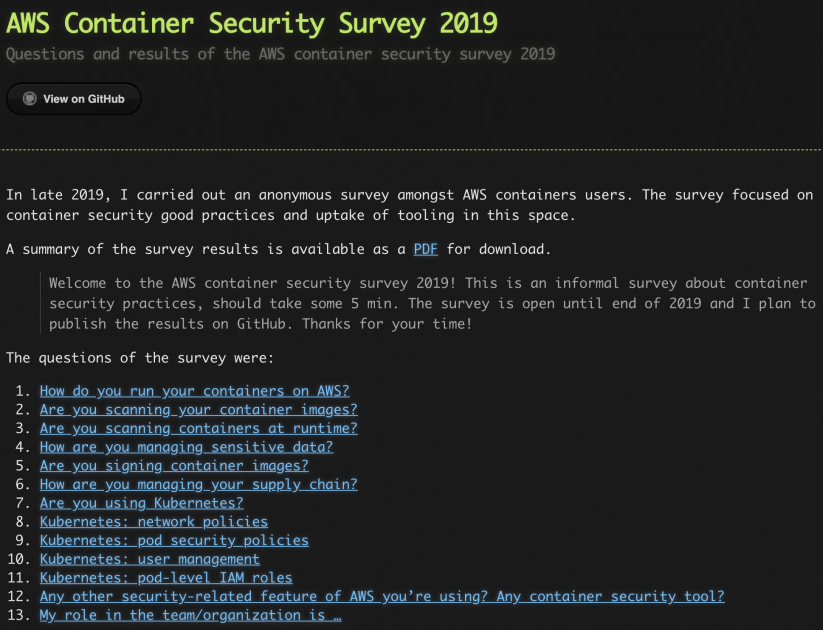

Security is a top priority in AWS, and in our service team we naturally focus on container security. In order to better assess where we stand, we conducted an anonymous survey in late 2019 amongst container users on AWS. Overall, we got 68 responses from a variety of roles, from ops folks and SREs to […]

am i nuts or is cloudwatch synthetics really really expensive

i’m calculating $73.44/month for a single check running once per minute

Looks like it’s just really expensive. Checkout Example 10 on https://aws.amazon.com/cloudwatch/pricing/

That seems really uncompetitive. There are so many options in this space

for some of our micro services we’d be looking at spending 4x for checks than we do for running the service!

In general, cloudwatch is expensive.

Even metrics cost something like $0.30/metric

when you combine that with k8s, which has like 10,000 metrics, it’s easy to spend more on the monitoring than you would on a small cluster.

Yeah, our Prometheus metrics in CloudWatch would be like 100k a month.

@ $0.0017 per canary run
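
Checking the $73.44 figure and the metrics remark in Python, using the $0.0017 per canary run quoted above and $0.30 per custom metric per month (the first CloudWatch pricing tier, which covers the first 10,000 metrics). A 30-day month is assumed.

```python
RUN_PRICE_USD = 0.0017   # per canary run
METRIC_PRICE_USD = 0.30  # per custom metric per month, first 10,000 metrics


def canary_monthly_cost(runs_per_hour: int, days: int = 30) -> float:
    return round(runs_per_hour * 24 * days * RUN_PRICE_USD, 2)


def metrics_monthly_cost(metric_count: int) -> float:
    if metric_count > 10_000:
        raise ValueError("higher (cheaper) pricing tiers are not modelled here")
    return round(metric_count * METRIC_PRICE_USD, 2)


print(canary_monthly_cost(60))       # one run per minute: 73.44
print(metrics_monthly_cost(10_000))  # 3000.0
```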

Just found an SES rule that was sending to an s3 bucket that used to exist, but no longer does. Is there any way to retrieve the emails it missed in the meantime?

depending on your AWS support level, you may try asking AWS Support and maybe they will be able to help. I wouldn’t bet on it, but there’s always a chance

2020-01-24

Has anybody run into issues with internal DNS not resolving correctly for ECS Service Discovery? I can see the correct IP in Route53 with a TTL of 60, but it’s not being picked up

Your application doesn’t pick it up?

What is your zone’s SOA TTL?

My application doesn’t, but I also tried pinging it elsewhere, and it’s also giving me the wrong ip

SOA TTL is 900

So I would cut SOA to 30 just in general

Because that acts like a negative cache

So if the record is queried before it exists and the response is not found, that negative response will be cached for the SOA TTL
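
To put numbers on the negative caching point: per RFC 2308, a "not found" answer is cached for the smaller of the SOA record's own TTL and the SOA MINIMUM field. A Python sketch, using what I believe is the Route 53 default SOA (TTL 900, MINIMUM 86400); the name servers in the sample string are placeholders.

```python
def negative_cache_ttl(soa_ttl: int, soa_rdata: str) -> int:
    """Seconds a resolver may cache NXDOMAIN/NODATA for this zone (RFC 2308)."""
    # SOA rdata fields: mname rname serial refresh retry expire minimum
    minimum = int(soa_rdata.split()[6])
    return min(soa_ttl, minimum)


route53_default = "ns-2048.awsdns-64.net. hostmaster.example.com. 1 7200 900 1209600 86400"
print(negative_cache_ttl(900, route53_default))  # 900: a miss is remembered for 15 minutes
print(negative_cache_ttl(30, route53_default))   # 30 after lowering the SOA TTL
```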

But what you are describing sounds like it could be something else.

AWS Cloud Map service is magically supposed to manage these mappings

Since you are getting an IP back but the wrong one, it doesn’t have to do with the SOA

First time I ran into an issue with it

I don’t have experience with that service so someone else here might know more like @maarten

Bah, just noticed Route53 operational issue alert in the AWS console

Been going on for over 2 hours too. SMH. Please tell me this almost never happens.

2020-01-27

#aws anyone have experience dealing with aws state machines? specifically, a missing state machine after a cloudformation (landing-zone) update, and my avm (vending machine) plays fail.

2020-01-28

hello, is anyone using a private AWS API Gateway with a custom Host header? Thanks

no but have you thought of using an ALB with lambda hooks instead ?

@Michel I think we may be doing that right now. We’re doing cross VPC and cross account access with a R53 private zone to a private ALB to a private API gateway. The ALB is required for cross account access otherwise the API Gateway could be shared cross VPC (same account) with just a VPC Endpoint.
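
On the Host header part: as far as I know, when a private REST API is called through the VPC endpoint's own DNS name (rather than with private DNS enabled), API Gateway identifies the API from either a `Host: <api-id>.execute-api.<region>.amazonaws.com` header or an `x-apigw-api-id: <api-id>` header. A Python sketch that builds such a request; the API ID, endpoint DNS name and function name are placeholders.

```python
from typing import Dict, Tuple


def private_api_request(api_id: str, region: str, vpce_dns_name: str,
                        stage: str, path: str,
                        use_host_header: bool = True) -> Tuple[str, Dict[str, str]]:
    """Return (url, headers) for calling a private API via a VPC endpoint DNS name."""
    url = f"https://{vpce_dns_name}/{stage}/{path.lstrip('/')}"
    if use_host_header:
        headers = {"Host": f"{api_id}.execute-api.{region}.amazonaws.com"}
    else:
        headers = {"x-apigw-api-id": api_id}
    return url, headers


url, headers = private_api_request(
    "abc123defg", "us-east-1",
    "vpce-0123456789abcdef0-abcdefgh.execute-api.us-east-1.vpce.amazonaws.com",
    "prod", "/items")
print(url)
print(headers)
```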

2020-01-29

Since VPCs are limited in my environment, I naively thought I could just run kops create cluster to create a new cluster inside my existing VPC (with this snippet).

Then it blew up because of subnet conflicts and I could see where this was going.

Most of the TF vpc modules I see expect to create a new one….

Should I:

• terraform import the VPC resource,

• manually replace vpc.vpc_id with something in var.vpc_id

• stop overthinking this and use some kops flag I don’t yet know about

?

The usual approach I follow with kops is to create the VPC and other AWS resources using Terraform and then use kops to create the actual cluster (just like you are doing). I do, however, split the subnet CIDRs accordingly when creating the VPC from TF. In your case, if you are planning to use the existing VPC, I guess terraform import might help. Not sure if this helps you though

@grv Thanks for the validation, I’m more confident now. So I guess I could run TF against the existing VPC and have it carve out the subnets, security groups, etc., then run kops to stitch them together into a cluster.
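
For carving subnets out of the existing VPC without colliding with what's already there, a small Python sketch using the standard `ipaddress` module; the CIDRs are placeholders for your VPC and its current subnets. (kops also has flags for reusing an existing VPC, such as `--vpc` on `kops create cluster`; check `kops create cluster --help` for your version.)

```python
import ipaddress
from typing import Iterable, List


def free_subnets(vpc_cidr: str, existing: Iterable[str], new_prefix: int, count: int) -> List[str]:
    """Return the first `count` /new_prefix blocks in the VPC that overlap no existing subnet."""
    vpc = ipaddress.ip_network(vpc_cidr)
    taken = [ipaddress.ip_network(cidr) for cidr in existing]
    picked: List[str] = []
    for candidate in vpc.subnets(new_prefix=new_prefix):
        if any(candidate.overlaps(net) for net in taken):
            continue
        picked.append(str(candidate))
        if len(picked) == count:
            return picked
    raise ValueError(f"only {len(picked)} free /{new_prefix} blocks left in {vpc_cidr}")


print(free_subnets("10.0.0.0/16", ["10.0.0.0/24", "10.0.1.0/24", "10.0.4.0/22"], 24, 3))
# ['10.0.2.0/24', '10.0.3.0/24', '10.0.8.0/24']
```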

That would make sense, but I have a feeling you might run into some kind of trouble while trying to play around existing cloud resources using tf. Again, saying maybe

Hi, has anyone deployed EKS on me-south-1 and successfully interacted with the cluster via kubectl getting credentials using aws sts assume-role ?

2020-01-30

Yes, same as other regions…

Looking for a simple condition to set in an EC2 instance template with an ALB that will hold back the second (HA) instance being deployed, so I can get the first one up with some manual config running, then remove the condition to launch instance B

2020-01-31

Hello, I’ve used the AWS landing zone pattern for a while but just wondering how others use the shared account for things like Vault? I.e put Vault in here or separate account? Also usage pattern - 1 Vault for dev/prod or 2 separate installations…Just wondering what others have done…cheers

I’ve always gone with a Vault cluster per environment

Deployed into the same account unless it is a break-glass type scenario

That’s an option, I was thinking we will have multiple prod accounts so a shared prod Vault cluster would be another way

Hey people

I have a question for AWS gurus

How do I use the PutOrganizationConfigRule API in Landing Zone to create an organization config rule?

https://docs.aws.amazon.com/config/latest/APIReference/API_PutOrganizationConfigRule.html
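
I haven't done this through Landing Zone, but at the API level it looks roughly like this Python/boto3 sketch, which must run with credentials for the organization's master account. It uses an AWS managed rule (`S3_BUCKET_PUBLIC_READ_PROHIBITED` as an example); a custom Lambda rule would pass `OrganizationCustomRuleMetadata` (with `LambdaFunctionArn` and `OrganizationConfigRuleTriggerTypes`) instead. The wrapper function name is hypothetical.

```python
from typing import Any, Dict, Iterable


def put_org_managed_rule(config_client, rule_name: str, rule_identifier: str,
                         excluded_accounts: Iterable[str] = ()) -> Dict[str, Any]:
    """Create or update an organization config rule backed by an AWS managed rule.

    config_client is expected to be boto3.client("config") in the master account.
    """
    kwargs: Dict[str, Any] = {
        "OrganizationConfigRuleName": rule_name,
        "OrganizationManagedRuleMetadata": {"RuleIdentifier": rule_identifier},
    }
    excluded = list(excluded_accounts)
    if excluded:
        kwargs["ExcludedAccounts"] = excluded
    return config_client.put_organization_config_rule(**kwargs)
```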

Going to try this channel as well. Does anyone know if ECS provides an SNS topic to subscribe to events like updating service, tasks starting/stopping, autoscaling events?

I don’t believe so

Does https://docs.aws.amazon.com/AmazonECS/latest/developerguide/ecs_cwet.html get you what you need?

I guess, if that’s the only option. Thanks

Looks like it only gives you “ECS Task State Change”, “ECS Container Instance State Change” triggers

Unless they’ve added something since I last looked. You subscribe a Lambda to the event. The Lambda needs to parse it to figure out what the change was, then run your logic for that change type.

At that point whether you send to SNS or do anything else is up to you
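
A Python sketch of that Lambda: it summarizes the two event types mentioned above and forwards the summary through a `publish` callback, which could wrap `boto3.client("sns").publish(TopicArn=..., Message=...)` if you want SNS. The detail field names (`taskArn`, `group`, `lastStatus`, `desiredStatus`, `containerInstanceArn`, `status`) are the ones I remember from these events; verify them against a real event before relying on them.

```python
from typing import Any, Callable, Dict, Optional


def summarize_ecs_event(event: Dict[str, Any]) -> Optional[str]:
    """Turn an ECS CloudWatch Events / EventBridge event into a one-line summary."""
    detail_type = event.get("detail-type")
    detail = event.get("detail", {})
    if detail_type == "ECS Task State Change":
        return (f"task {detail.get('taskArn')} ({detail.get('group')}): "
                f"{detail.get('lastStatus')} -> desired {detail.get('desiredStatus')}")
    if detail_type == "ECS Container Instance State Change":
        return f"container instance {detail.get('containerInstanceArn')}: {detail.get('status')}"
    return None  # other event types are ignored


def make_handler(publish: Callable[[str], None]):
    """Build a Lambda handler that publishes summaries of the events it understands."""
    def handler(event: Dict[str, Any], context: Any = None) -> Optional[str]:
        summary = summarize_ecs_event(event)
        if summary is not None:
            publish(summary)
        return summary
    return handler
```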

Thanks @joshmyers @Steven

Not great. It needs finer events

How are people dealing with upgrading minor versions of Kubernetes in EKS in large clusters?